AWS Certified Machine Learning – Specialty (MLS-C01) Exam Learning Path

- I finally re-certified on the updated AWS Certified Machine Learning – Specialty (MLS-C01) certification exam after 3 months of preparation.

- Of all the Professional and Specialty certifications, I find this one the toughest, partly because I am still diving deep into machine learning and relearned everything from the basics for this certification.

- Machine Learning is a vast specialization in itself and, with AWS services added, there is a lot to cover and know for the exam. This is the only exam where the majority of the focus is on concepts outside of AWS, i.e. pure machine learning. It also includes AWS Machine Learning and Data Engineering services.

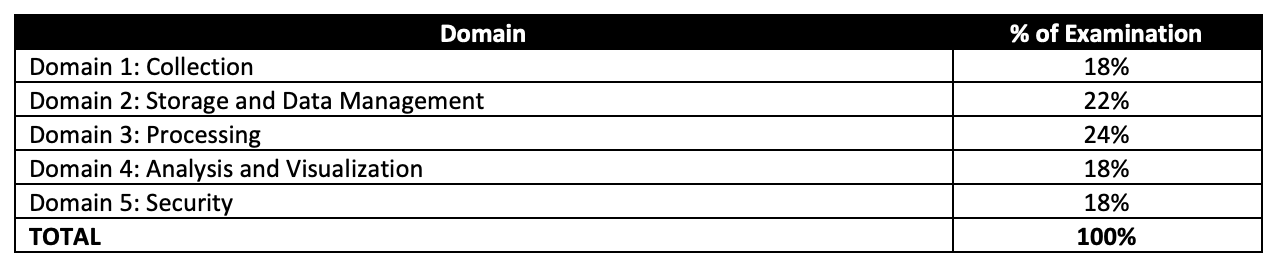

AWS Certified Machine Learning – Specialty (MLS-C01) Exam Content

- AWS Certified Machine Learning – Specialty (MLS-C01) exam validates the ability to

- Select and justify the appropriate ML approach for a given business problem.

- Identify appropriate AWS services to implement ML solutions.

- Design and implement scalable, cost-optimized, reliable, and secure ML solutions.

Refer to the AWS Certified Machine Learning – Specialty Exam Guide for details.

AWS Certified Machine Learning – Specialty (MLS-C01) Exam Summary

- Specialty exams are tough, lengthy, and tiresome. Most of the questions and answer options have a lot of prose and a lot of reading that needs to be done, so be sure you are prepared and manage your time well.

- MLS-C01 exam has 65 questions to be solved in 170 minutes which gives you roughly 2 1/2 minutes to attempt each question.

- MLS-C01 exam includes two types of questions, multiple-choice and multiple-response.

- MLS-C01 has a scaled score between 100 and 1,000. The scaled score needed to pass the exam is 750.

- Specialty exams currently cost $300 + tax.

- You can get an additional 30 minutes if English is your second language by requesting Exam Accommodations. It might not be needed for Associate exams but is helpful for Professional and Specialty ones.

- As always, mark the questions for review, move on, and come back to them after you are done with all.

- As always, having a rough architecture or mental picture of the setup helps you focus on the areas that you need to improve. Trust me, you will be able to eliminate 2 answers for sure and then need to focus on only the other two. Read the remaining 2 answers closely to spot where they differ; that would help you reach the right answer or at least give you a 50% chance of getting it right.

- AWS exams can be taken either in person at a test center or online. I prefer to take them online as it provides a lot of flexibility. Just make sure you have a proper place to take the exam with no disturbance and nothing around you.

- Also, if you are taking the AWS Online exam for the first time try to join at least 30 minutes before the actual time as I have had issues with both PSI and Pearson with long wait times.

AWS Certified Machine Learning – Specialty (MLS-C01) Exam Resources

- Online Courses

- Practice tests

AWS Certified Machine Learning – Specialty (MLS-C01) Exam Topics

- AWS Certified Machine Learning – Specialty exam covers a lot of Machine Learning concepts. It digs deep into Machine Learning concepts, most of which are not related to AWS.

- AWS Certified Machine Learning – Specialty exam covers the end-to-end (E2E) Machine Learning lifecycle, right from data collection and transformation, through making the data usable and efficient for Machine Learning, pre-processing, and training and validation, to implementation.

Machine Learning Concepts

- Exploratory Data Analysis

- Feature selection and Engineering

- remove features that are not related to training

- remove features that have the same values, very low correlation, very little variance, or a lot of missing values

- Apply techniques like Principal Component Analysis (PCA) for dimensionality reduction i.e. reduce the number of features.

- Apply techniques such as One-hot encoding and label encoding to help convert strings to numeric values, which are easier to process.

- Apply Normalization, i.e. rescale values to a range between 0 and 1, to handle features whose values vary widely in scale.

- Apply feature engineering for feature reduction e.g. using a single height/weight feature instead of both features.
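The normalization and one-hot encoding steps above can be sketched in a few lines of plain Python. This is only a minimal illustration, assuming min-max scaling as the normalization method; the function names and sample values are made up for the example, and in practice a library such as scikit-learn would normally do this work.

```python
def min_max_scale(values):
    """Rescale numeric values linearly into the range [0, 1]."""
    lo, hi = min(values), max(values)
    if hi == lo:
        # A constant feature carries no information; map it to 0.0.
        return [0.0 for _ in values]
    return [(v - lo) / (hi - lo) for v in values]


def one_hot_encode(labels):
    """Turn each category into a vector with a single 1.

    Columns follow the sorted order of the distinct categories.
    """
    categories = sorted(set(labels))
    index = {cat: i for i, cat in enumerate(categories)}
    vectors = [
        [1 if index[label] == i else 0 for i in range(len(categories))]
        for label in labels
    ]
    return categories, vectors


# Hypothetical sample data, for illustration only.
incomes = [20_000, 50_000, 120_000]
colors = ["red", "green", "red"]

scaled = min_max_scale(incomes)
categories, encoded = one_hot_encode(colors)
print(scaled)
print(categories, encoded)
```

Label encoding would instead map each category to a single integer, which is more compact but implies an ordering that the categories may not have.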

- Handle Missing data

- remove the feature or rows with missing data

- impute using Mean/Median values – valid only for numeric features and not categorical ones; it also does not factor in correlation between features

- impute using k-NN, Multivariate Imputation by Chained Equations (MICE), or Deep Learning – more accurate and factors in correlation between features

- Handle unbalanced data

- Source more data

- Oversample minority or Undersample majority

- Data augmentation using techniques like Synthetic Minority Oversampling Technique (SMOTE).
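To make the SMOTE idea concrete, here is a simplified sketch: a synthetic minority sample is placed at a random position on the line segment between a minority point and a nearby minority neighbour. It assumes a single nearest neighbour (real SMOTE picks randomly among the k nearest), and the function name and data are hypothetical.

```python
import random


def smote_like_sample(minority, rng):
    """Create one synthetic point between a minority sample and its
    nearest minority neighbour (simplified SMOTE with k = 1)."""
    base = rng.choice(minority)
    others = [p for p in minority if p is not base]
    # Nearest neighbour by squared Euclidean distance.
    neighbour = min(
        others,
        key=lambda p: sum((a - b) ** 2 for a, b in zip(base, p)),
    )
    gap = rng.random()  # position along the segment, in [0, 1)
    return [a + gap * (b - a) for a, b in zip(base, neighbour)]


rng = random.Random(0)  # fixed seed so the run is repeatable
minority = [[1.0, 2.0], [1.5, 2.5], [3.0, 1.0]]  # made-up minority class
synthetic = [smote_like_sample(minority, rng) for _ in range(5)]
print(synthetic)
```

Because every synthetic point is an interpolation, it always stays inside the region already spanned by the minority class, unlike plain random noise.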


- Modeling

- Know about Algorithms – Supervised, Unsupervised and Reinforcement – and which algorithm is best suited based on whether the available data is labelled or unlabelled.

- Supervised learning trains on labeled data e.g. Linear regression, Logistic regression, Decision trees, Random Forests

- Unsupervised learning trains on unlabelled data e.g. PCA, SVD, K-means

- Reinforcement learning trains based on actions and rewards e.g. Q-Learning

- Hyperparameters

- are parameters exposed by machine learning algorithms that control how the underlying algorithm operates and their values affect the quality of the trained models

- some of the common hyperparameters are learning rate, batch size, and number of epochs (hint: if the learning rate is too large, the optimizer can overshoot the minimum and the loss oscillates; if the learning rate is too small, training needs too many steps, which makes it slower and less efficient)
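The learning rate hint can be seen on a toy problem. The sketch below runs plain gradient descent on f(x) = x², a function chosen only for illustration, with three learning rates picked to show the three behaviours; none of these numbers come from the exam material.

```python
def gradient_descent(learning_rate, steps=20, start=5.0):
    """Minimise f(x) = x**2 (gradient 2x) and return every visited x."""
    x = start
    path = [x]
    for _ in range(steps):
        x = x - learning_rate * 2 * x
        path.append(x)
    return path


too_large = gradient_descent(1.1)    # overshoots: sign flips and |x| grows
too_small = gradient_descent(0.001)  # barely moves within 20 steps
reasonable = gradient_descent(0.3)   # shrinks quickly towards the minimum at 0

print("too large :", too_large[:4])
print("too small :", too_small[-1])
print("reasonable:", reasonable[-1])
```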


- Evaluation

- Know difference in evaluating model accuracy

- Use Area Under the (Receiver Operating Characteristic) Curve (AUC) for Binary classification

- Use root mean square error (RMSE) metric for regression

- Understand Confusion matrix

- A true positive is an outcome where the model correctly predicts the positive class. Similarly, a true negative is an outcome where the model correctly predicts the negative class.

- A false positive is an outcome where the model incorrectly predicts the positive class. A false negative is an outcome where the model incorrectly predicts the negative class.

- Recall or Sensitivity or TPR (True Positive Rate): number of items correctly identified as positive out of all actual positives, TP/(TP+FN) (hint: use this for cases like fraud detection, where the cost of marking non-fraud as fraud is lower than marking fraud as non-fraud)

- Specificity or TNR (True Negative Rate): number of items correctly identified as negative out of all actual negatives, TN/(TN+FP) (hint: use this for cases like videos for kids, where the cost of dropping a few valid videos is lower than showing a few bad ones)
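A small worked example of the confusion-matrix terms and the two formulas above, using made-up labels where 1 is the positive class:

```python
def confusion_counts(y_true, y_pred):
    """Count TP, TN, FP, FN for binary labels (1 = positive, 0 = negative)."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == 1 and p == 1)
    tn = sum(1 for t, p in pairs if t == 0 and p == 0)
    fp = sum(1 for t, p in pairs if t == 0 and p == 1)
    fn = sum(1 for t, p in pairs if t == 1 and p == 0)
    return tp, tn, fp, fn


def recall(tp, fn):
    """True Positive Rate: TP / (TP + FN)."""
    return tp / (tp + fn)


def specificity(tn, fp):
    """True Negative Rate: TN / (TN + FP)."""
    return tn / (tn + fp)


# Hypothetical ground truth and predictions.
y_true = [1, 1, 1, 0, 0, 0, 0, 1]
y_pred = [1, 0, 1, 0, 1, 0, 0, 1]

tp, tn, fp, fn = confusion_counts(y_true, y_pred)
print(tp, tn, fp, fn)
print(recall(tp, fn), specificity(tn, fp))
```

Here one fraud-like positive is missed (a false negative) and one negative is flagged (a false positive), so both rates come out at 3/4.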

- Handle Overfitting problems

- Simplify the model, by reducing the number of layers

- Early Stopping – form of regularization while training a model with an iterative method, such as gradient descent

- Data Augmentation

- Regularization – technique to reduce the complexity of the model

- Dropout is a regularization technique that prevents overfitting

- Never train on test data
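Early stopping, listed above as a way to handle overfitting, is usually implemented with a "patience" counter on the validation loss. The sketch below is one common variant under that assumption; the function name, the patience value, and the loss values are illustrative only.

```python
def early_stopping_epoch(val_losses, patience=2):
    """Return (stop_epoch, best_epoch).

    Training stops once the validation loss has failed to improve for
    `patience` consecutive epochs; best_epoch is where the lowest loss
    was seen, i.e. the checkpoint one would keep.
    """
    best = float("inf")
    best_epoch = 0
    waited = 0
    for epoch, loss in enumerate(val_losses):
        if loss < best:
            best, best_epoch, waited = loss, epoch, 0
        else:
            waited += 1
            if waited >= patience:
                return epoch, best_epoch
    return len(val_losses) - 1, best_epoch


# Made-up validation losses: improving at first, then rising (overfitting).
val_losses = [0.90, 0.70, 0.60, 0.62, 0.65, 0.70]
stop_epoch, best_epoch = early_stopping_epoch(val_losses)
print(stop_epoch, best_epoch)
```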


Machine Learning Services

- SageMaker

- supports File mode, Pipe mode, and Fast File mode

- File mode loads all of the data from S3 to the training instance volumes, whereas Pipe mode streams data directly from S3

- File mode needs disk space to store both the final model artifacts and the full training dataset, whereas Pipe mode helps reduce the required size of the EBS volumes.

- Fast File mode combines the ease of use of the existing File Mode with the performance of Pipe Mode.

- Using RecordIO format allows algorithms to take advantage of Pipe mode when training the algorithms that support it.

- supports Model tracking capability to manage up to thousands of machine learning model experiments

- supports automatic scaling for production variants. Automatic scaling dynamically adjusts the number of instances provisioned for a production variant in response to changes in your workload

- provides pre-built Docker images for its built-in algorithms and the supported deep learning frameworks used for training & inference

- SageMaker Automatic Model Tuning

- is the process of finding a set of hyperparameters for an algorithm that can yield an optimal model.

- Best practices

- limit the search to a small number of hyperparameters, as the difficulty of a hyperparameter tuning job depends primarily on the number of hyperparameters that Amazon SageMaker has to search

- DO NOT specify a very large range to cover every possible value for a hyperparameter, as the size of the range affects the success of hyperparameter optimization.

- use logarithmic scaling for hyperparameters that are log-scaled (for example, learning rate) to improve hyperparameter optimization.

- running one training job at a time achieves the best results with the least amount of compute time.

- Design distributed training jobs so that they report the objective metric that you want.
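The point about log-scaled hyperparameters is easier to see with numbers. The sketch below compares linear and logarithmic sampling over a hypothetical learning-rate range of 1e-5 to 1e-1; it illustrates the idea in plain Python and is not how SageMaker implements its search.

```python
import math
import random


def sample_log_uniform(low, high, rng):
    """Draw a value whose logarithm is uniform between log(low) and log(high)."""
    return 10 ** rng.uniform(math.log10(low), math.log10(high))


rng = random.Random(42)  # fixed seed for a repeatable run
low, high = 1e-5, 1e-1   # assumed search range for a learning rate

linear = [rng.uniform(low, high) for _ in range(1000)]
log_scaled = [sample_log_uniform(low, high, rng) for _ in range(1000)]

# Share of samples that fall in the lower half of the exponent range.
share_linear = sum(v < 1e-3 for v in linear) / len(linear)
share_log = sum(v < 1e-3 for v in log_scaled) / len(log_scaled)
print(share_linear, share_log)
```

With linear sampling almost every draw lands near the top of the range, so small learning rates are barely explored; logarithmic sampling spreads the draws evenly across orders of magnitude.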

- know how to take advantage of multiple GPUs (hint: increase the learning rate and batch size in proportion to the increase in GPUs)

- Elastic Inference (now replaced by Inferentia) helps attach low-cost GPU-powered acceleration to EC2 and SageMaker instances or ECS tasks to reduce the cost of running deep learning inference.

- SageMaker Inference options.

- Real-time inference is ideal for online inferences that have low latency or high throughput requirements.

- Serverless Inference is ideal for intermittent or unpredictable traffic patterns as it manages all of the underlying infrastructure with no need to manage instances or scaling policies.

- Batch Transform is suitable for offline processing when large amounts of data are available upfront and you don’t need a persistent endpoint.

- Asynchronous Inference is ideal when you want to queue requests and have large payloads with long processing times.

- SageMaker Model deployment allows deploying multiple variants of a model to the same SageMaker endpoint to test new models without impacting the user experience

- Production Variants

- supports A/B or Canary testing where you can allocate a portion of the inference requests to each variant.

- helps compare production variants’ performance relative to each other.

- Shadow Variants

- replicates a portion of the inference requests that go to the production variant to the shadow variant.

- logs the responses of the shadow variant for comparison; they are not returned to the caller.

- helps test the performance of the shadow variant without exposing the caller to the response produced by the shadow variant.
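As a rough sketch of how A/B traffic is split across production variants: each variant carries a weight, and its share of requests is its weight divided by the sum of all weights. The dictionary below follows the field names of the SageMaker `CreateEndpointConfig` request (`ProductionVariants`, `InitialVariantWeight`, and so on); the config name, model names, and instance type are placeholders, and nothing is sent to AWS.

```python
def build_endpoint_config(config_name, variants):
    """Build keyword arguments shaped like a CreateEndpointConfig request.

    `variants` is a list of (variant_name, model_name, weight) tuples.
    """
    return {
        "EndpointConfigName": config_name,
        "ProductionVariants": [
            {
                "VariantName": name,
                "ModelName": model_name,
                "InstanceType": "ml.m5.large",  # placeholder instance type
                "InitialInstanceCount": 1,
                "InitialVariantWeight": weight,
            }
            for name, model_name, weight in variants
        ],
    }


def traffic_share(config):
    """Fraction of requests each variant receives: weight / sum of weights."""
    variants = config["ProductionVariants"]
    total = sum(v["InitialVariantWeight"] for v in variants)
    return {v["VariantName"]: v["InitialVariantWeight"] / total for v in variants}


# Hypothetical names: 90% of traffic to the current model, 10% to a candidate.
config = build_endpoint_config(
    "demo-ab-config",
    [("current", "model-v1", 9.0), ("candidate", "model-v2", 1.0)],
)
shares = traffic_share(config)
print(shares)
```

With boto3, a dictionary like this would typically be passed as `create_endpoint_config(**config)` on a SageMaker client, assuming the referenced models already exist.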

- SageMaker Managed Spot Training uses Spot Instances to save cost and, with the Checkpointing feature, can save the state of ML models during training

- SageMaker Feature Store

- helps to create, share, and manage features for ML development.

- is a centralized store for features and associated metadata so features can be easily discovered and reused.

- SageMaker Debugger provides tools to debug training jobs and resolve problems such as overfitting, saturated activation functions, and vanishing gradients to improve the model’s performance.

- SageMaker Model Monitor monitors the quality of SageMaker machine learning models in production and can help set alerts that notify when there are deviations in the model quality.

- SageMaker Automatic Model Tuning helps find a set of hyperparameters for an algorithm that can yield an optimal model.

- SageMaker Data Wrangler

- reduces the time it takes to aggregate and prepare tabular and image data for ML from weeks to minutes.

- simplifies the process of data preparation (including data selection, cleansing, exploration, visualization, and processing at scale) and feature engineering.

- SageMaker Experiments is a capability of SageMaker that lets you create, manage, analyze, and compare machine learning experiments.

- SageMaker Clarify helps improve the ML models by detecting potential bias and helping to explain the predictions that the models make.

- SageMaker Model Governance is a framework that gives systematic visibility into ML model development, validation, and usage.

- SageMaker Autopilot is an automated machine learning (AutoML) feature set that automates the end-to-end process of building, training, tuning, and deploying machine learning models.

- SageMaker Neo enables machine learning models to train once and run anywhere in the cloud and at the edge.

- SageMaker API and SageMaker Runtime support VPC interface endpoints powered by AWS PrivateLink that helps connect VPC directly to the SageMaker API or SageMaker Runtime using AWS PrivateLink without using an internet gateway, NAT device, VPN connection, or AWS Direct Connect connection.

- Algorithms –

- BlazingText provides Word2vec and text classification algorithms

- DeepAR provides a supervised learning algorithm for forecasting scalar (one-dimensional) time series (hint: train for new products based on existing products' sales data).

- Factorization Machines is a supervised algorithm for classification and regression tasks that helps capture interactions between features within high-dimensional sparse datasets economically.

- Image classification algorithm is a supervised learning algorithm that supports multi-label classification.

- IP Insights is an unsupervised learning algorithm that learns the usage patterns for IPv4 addresses.

- K-means is an unsupervised learning algorithm for clustering as it attempts to find discrete groupings within data, where members of a group are as similar as possible to one another and as different as possible from members of other groups.

- k-nearest neighbors (k-NN) algorithm is an index-based algorithm. It uses a non-parametric method for classification or regression.

- Latent Dirichlet Allocation (LDA) algorithm is an unsupervised learning algorithm that attempts to describe a set of observations as a mixture of distinct categories. Used to discover a user-specified number of topics shared by documents within a text corpus.

- Neural Topic Model (NTM) Algorithm is an unsupervised learning algorithm that is used to organize a corpus of documents into topics that contain word groupings based on their statistical distribution

- Linear models are supervised learning algorithms used for solving either classification or regression problems.

- For regression (predictor_type='regressor'), the score is the prediction produced by the model.

- For classification (predictor_type='binary_classifier' or predictor_type='multiclass_classifier'), the model returns a score and also a predicted_label.

- Object Detection algorithm detects and classifies objects in images using a single deep neural network

- Principal Component Analysis (PCA) is an unsupervised machine learning algorithm that attempts to reduce the dimensionality (number of features) (hint: dimensionality reduction)

- Random Cut Forest (RCF) is an unsupervised algorithm for detecting anomalous data points (hint: anomaly detection)

- Sequence to Sequence is a supervised learning algorithm where the input is a sequence of tokens (for example, text, audio) and the output generated is another sequence of tokens. (hint: text summarization is the key use case)


- SageMaker Ground Truth

- provides automated data labeling using machine learning

- helps build highly accurate training datasets for machine learning quickly using Amazon Mechanical Turk

- provides annotation consolidation to help improve the accuracy of the data object’s labels. It combines the results of multiple workers’ annotation tasks into one high-fidelity label.

- automated data labeling uses machine learning to label portions of the data automatically without having to send them to human workers

Machine Learning & AI Managed Services

- Comprehend

- natural language processing (NLP) service to find insights and relationships in text.

- identifies the language of the text; extracts key phrases, places, people, brands, or events; understands how positive or negative the text is; analyzes text using tokenization and parts of speech; and automatically organizes a collection of text files by topic.

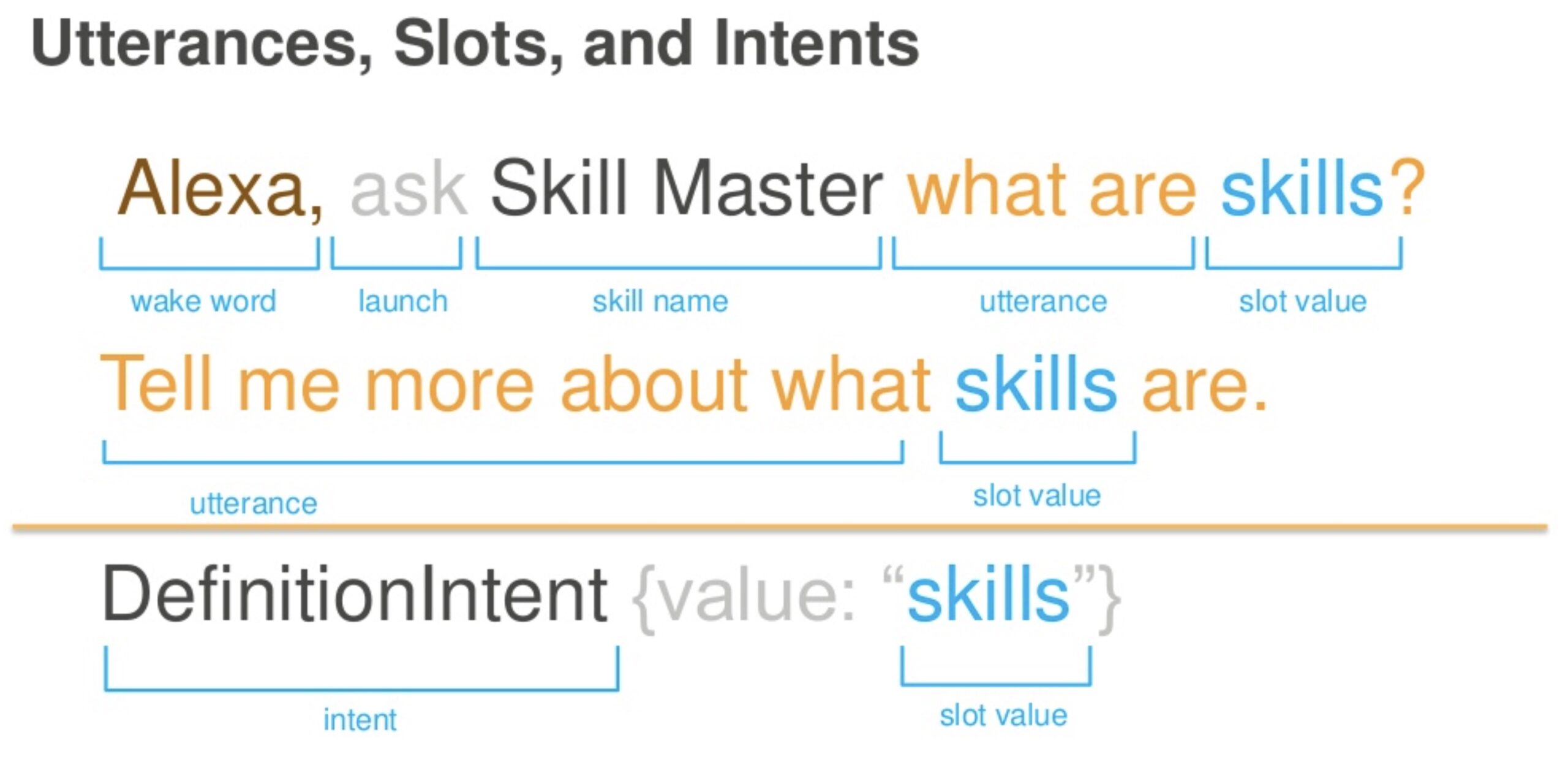

- Lex

- provides conversational interfaces using voice and text, helpful in building voice and text chatbots

- Polly

- converts text into speech

- supports Speech Synthesis Markup Language (SSML) tags like prosody so users can adjust the speech rate, pitch or volume.

- supports pronunciation lexicons to customize the pronunciation of words
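For a feel of what the SSML prosody tag looks like, the sketch below builds a small SSML document and checks that it is well-formed XML. The helper name and the sample sentence are made up; `rate` and `volume` are standard prosody attributes, and `slow` and `loud` are among their named values.

```python
import xml.etree.ElementTree as ET
from xml.sax.saxutils import escape


def build_ssml(text, rate="slow", volume="loud"):
    """Wrap text in <speak><prosody ...> so speech rate and volume are adjusted."""
    return (
        f'<speak><prosody rate="{rate}" volume="{volume}">'
        f"{escape(text)}"  # escape &, < and > so the SSML stays valid XML
        "</prosody></speak>"
    )


ssml = build_ssml("Welcome to the exam & good luck.")
root = ET.fromstring(ssml)  # raises ParseError if the markup is malformed
print(ssml)
```

When calling Polly, a string like this is normally sent with the text type set to SSML rather than plain text.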

- Rekognition – analyze images and video

- helps identify objects, people, text, scenes, and activities in images and videos, as well as detect any inappropriate content.

- Translate – natural and fluent language translation

- Transcribe – automatic speech recognition (ASR) speech-to-text

- Kendra – an intelligent search service that uses NLP and advanced ML algorithms to return specific answers to search questions from your data.

- Panorama brings computer vision to the on-premises camera network.

- Augmented AI (Amazon A2I) is an ML service that makes it easy to build the workflows required for human review.

- Forecast – time-series forecasting service that uses ML to deliver highly accurate forecasts.

Analytics

- Make sure you know and understand data engineering concepts mainly in terms of data capture, migration, transformation, and storage.

- Kinesis

- Understand Kinesis Data Streams and Kinesis Data Firehose in depth

- Kinesis Data Analytics can process and analyze streaming data using standard SQL and integrates with Data Streams and Firehose

- Know Kinesis Data Streams vs Kinesis Firehose

- Know Kinesis Data Streams is open-ended on both the producer and the consumer side. It supports KCL and works with Spark.

- Know Kinesis Firehose is open-ended on the producer side only. Data is delivered to destinations such as S3, Redshift, and OpenSearch (ElasticSearch).

- Kinesis Firehose works in batches with a minimum 60-second interval.

- Kinesis Data Firehose supports data transformation using a Lambda function, as well as record format conversion (hint: can be used for transforming CSV or JSON into Parquet)

- Kinesis Video Streams provides a fully managed service to ingest, index, store, and stream live video. HLS can be used to view a Kinesis video stream, either for live playback or to view archived video.
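For the Firehose data transformation mentioned above, a transformation Lambda receives base64-encoded records and must return each one with its `recordId`, a `result` status, and the re-encoded `data`. The sketch below converts CSV lines to JSON under that contract; the column names are hypothetical, and the sample event at the bottom only simulates an invocation locally.

```python
import base64
import csv
import io
import json

FIELDS = ["sensor_id", "temperature"]  # hypothetical CSV column names


def lambda_handler(event, context):
    """Convert each CSV record in a Firehose batch into a JSON line."""
    output = []
    for record in event["records"]:
        line = base64.b64decode(record["data"]).decode("utf-8").strip()
        try:
            values = next(csv.reader(io.StringIO(line)))
            if len(values) != len(FIELDS):
                raise ValueError("unexpected number of columns")
            payload = json.dumps(dict(zip(FIELDS, values))) + "\n"
            result = "Ok"
            data = base64.b64encode(payload.encode("utf-8")).decode("utf-8")
        except (StopIteration, ValueError):
            # Hand the original payload back and mark the record as failed.
            result, data = "ProcessingFailed", record["data"]
        output.append(
            {"recordId": record["recordId"], "result": result, "data": data}
        )
    return {"records": output}


# Local simulation with one valid and one malformed record.
sample_event = {
    "records": [
        {"recordId": "1", "data": base64.b64encode(b"s-1,21.5\n").decode()},
        {"recordId": "2", "data": base64.b64encode(b"bad\n").decode()},
    ]
}
response = lambda_handler(sample_event, None)
decoded = json.loads(base64.b64decode(response["records"][0]["data"]))
print(decoded, response["records"][1]["result"])
```

Once records are JSON, Firehose's record format conversion can take over the step to Parquet, so the Lambda only has to handle the CSV-to-JSON part.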

- OpenSearch (ElasticSearch) is a search service that supports indexing, full-text search, faceting, etc.

- Data Pipeline helps define data-driven flows to automate and schedule regular data movement and data processing activities in AWS

- Glue is a fully managed, ETL (extract, transform, and load) service that automates the time-consuming steps of data preparation for analytics

- helps set up, orchestrate, and monitor complex data flows.

- Glue Data Catalog is a central repository to store structural and operational metadata for all the data assets.

- Glue crawler connects to a data store, extracts the schema of the data, and then populates the Glue Data Catalog with this metadata

- Glue DataBrew is a visual data preparation tool that enables users to clean and normalize data without writing any code.

- DataSync is an online data transfer service that simplifies, automates, and accelerates moving data between storage systems and services.

Security, Identity & Compliance

- Security is covered very lightly. (hint: SageMaker can read data from KMS-encrypted S3. Make sure the KMS key policy includes the role attached to SageMaker)
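The KMS hint can be pictured as one extra statement in the key policy that names the SageMaker execution role as a principal. The sketch below builds such a statement as a Python dictionary; the role ARN and account ID are placeholders, and the exact set of actions needed depends on whether the job only reads or also writes encrypted data, so treat the list as an assumption.

```python
import json


def sagemaker_kms_statement(role_arn):
    """Key policy statement letting the given role use the KMS key."""
    return {
        "Sid": "AllowSageMakerRoleToUseKey",
        "Effect": "Allow",
        "Principal": {"AWS": role_arn},
        # Decrypt covers reading encrypted S3 objects; GenerateDataKey is
        # needed when the role also writes encrypted output.
        "Action": ["kms:Decrypt", "kms:GenerateDataKey", "kms:DescribeKey"],
        "Resource": "*",
    }


# Placeholder ARN, not a real role.
role_arn = "arn:aws:iam::111122223333:role/ExampleSageMakerExecutionRole"
statement = sagemaker_kms_statement(role_arn)
print(json.dumps(statement, indent=2))
```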

Management & Governance Tools

- Understand AWS CloudWatch for Logs and Metrics. (hint: SageMaker is integrated with CloudWatch, which stores its logs and metrics)

Storage

- Understand Data Storage Options – Know patterns for S3 vs RDS vs DynamoDB vs Redshift. (hint: S3 is the default storage option for ML data and Big Data, so look for it in the answers.)