DynamoDB Auto Scaling

- DynamoDB Auto Scaling uses the AWS Application Auto Scaling service to dynamically adjust provisioned throughput capacity on your behalf, in response to actual traffic patterns.

- Application Auto Scaling enables a DynamoDB table or a global secondary index to increase its provisioned read and write capacity to handle sudden increases in traffic, without throttling.

- When the workload decreases, Application Auto Scaling decreases the throughput so that you don’t pay for unused provisioned capacity.

- Auto Scaling applies to tables and global secondary indexes in provisioned capacity mode; on-demand capacity mode scales automatically and does not need it.

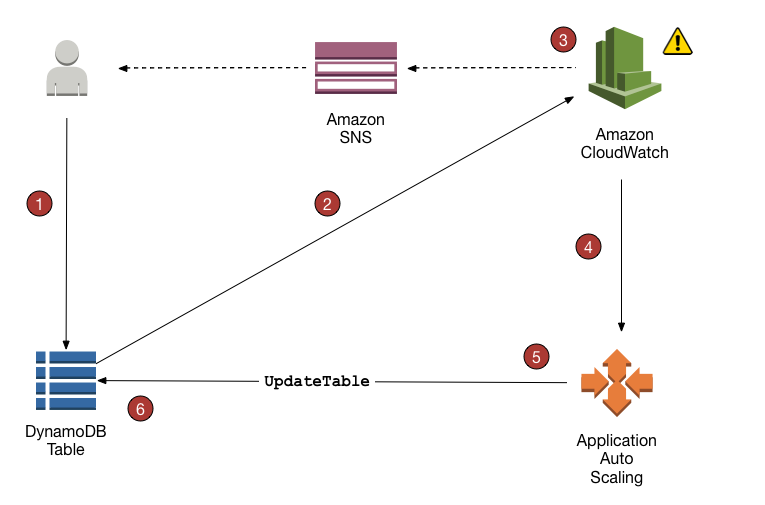

DynamoDB Auto Scaling Process

- An Application Auto Scaling policy is created for the DynamoDB table.

- DynamoDB publishes consumed capacity metrics to CloudWatch.

- If the table’s consumed capacity exceeds the target utilization (or falls below the target) for a specific length of time, CloudWatch triggers an alarm. You can view the alarm on the console and receive notifications using Simple Notification Service – SNS.

- The upper threshold alarm is triggered when consumed reads or writes breach the target utilization percent for two consecutive minutes.

- The lower threshold alarm is triggered after traffic falls below the target utilization minus 20 percent for 15 consecutive minutes.

- The CloudWatch alarm invokes Application Auto Scaling to evaluate the scaling policy.

- Application Auto Scaling issues an `UpdateTable` request to adjust the table’s provisioned throughput.

- DynamoDB processes the `UpdateTable` request, dynamically increasing (or decreasing) the table’s provisioned throughput capacity so that it approaches your target utilization.
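
The alarm thresholds described above can be illustrated with a small simulation. This is only a sketch of the timing rules (two consecutive minutes above target, 15 consecutive minutes below target minus 20 percentage points), not the actual CloudWatch implementation; the function name `evaluate_alarms` and its parameters are hypothetical.

```python
from typing import List, Optional


def evaluate_alarms(
    utilization_pct: List[float],
    target_pct: float = 70.0,
    high_minutes: int = 2,
    low_minutes: int = 15,
    low_offset_pct: float = 20.0,
) -> List[Optional[str]]:
    """Return one decision per minute: 'scale_out', 'scale_in', or None.

    utilization_pct holds one consumed/provisioned percentage per minute.
    """
    low_threshold = target_pct - low_offset_pct  # e.g. 70 - 20 = 50
    above = 0  # consecutive minutes above the target
    below = 0  # consecutive minutes below the lower threshold
    decisions: List[Optional[str]] = []
    for value in utilization_pct:
        above = above + 1 if value > target_pct else 0
        below = below + 1 if value < low_threshold else 0
        if above >= high_minutes:
            decisions.append("scale_out")
        elif below >= low_minutes:
            decisions.append("scale_in")
        else:
            decisions.append(None)
    return decisions


if __name__ == "__main__":
    # A short burst above 70% triggers scale-out in its second minute.
    print(evaluate_alarms([60, 80, 85, 60]))
```

With a 70% target, a single minute above target does nothing, while a dip below 50% has to persist for the full 15 minutes before a scale-in is considered.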

Auto Scaling Configuration

- Target Utilization: The percentage of provisioned throughput that should be consumed at any point in time; Auto Scaling adjusts capacity to keep utilization near this value (typically 70%).

- Minimum Capacity: The lower bound for provisioned throughput that Auto Scaling will not scale below.

- Maximum Capacity: The upper bound for provisioned throughput that Auto Scaling will not scale above.

- Scaling Policy: Defines how Auto Scaling responds to changes in workload.

- Auto Scaling can be configured for:

  - Tables (read and write capacity)

  - Global Secondary Indexes (read and write capacity)

  - Each can be configured independently
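
As a rough illustration of how these settings map onto the Application Auto Scaling API, the sketch below builds the parameters for `register_scalable_target` and `put_scaling_policy`. The helper names `scaling_requests` and `apply_scaling`, the policy naming scheme, and the table and index names are assumptions made for the example; the boto3 call is kept in a separate function so the request shapes can be inspected without AWS credentials.

```python
from typing import Any, Dict, Optional, Tuple


def scaling_requests(
    table: str,
    dimension: str,  # "Read" or "Write"
    min_capacity: int,
    max_capacity: int,
    target_pct: float = 70.0,
    index: Optional[str] = None,
) -> Tuple[Dict[str, Any], Dict[str, Any]]:
    """Build (scalable target, scaling policy) parameters for a table or GSI."""
    if dimension not in ("Read", "Write"):
        raise ValueError("dimension must be 'Read' or 'Write'")
    if not 0 < min_capacity <= max_capacity:
        raise ValueError("need 0 < min_capacity <= max_capacity")

    scope = "index" if index else "table"
    resource_id = f"table/{table}" + (f"/index/{index}" if index else "")
    common = {
        "ServiceNamespace": "dynamodb",
        "ResourceId": resource_id,
        "ScalableDimension": f"dynamodb:{scope}:{dimension}CapacityUnits",
    }
    target = {**common, "MinCapacity": min_capacity, "MaxCapacity": max_capacity}
    policy = {
        **common,
        # Hypothetical naming scheme, chosen only for this example.
        "PolicyName": f"{table}-{dimension.lower()}-target-tracking",
        "PolicyType": "TargetTrackingScaling",
        "TargetTrackingScalingPolicyConfiguration": {
            "TargetValue": target_pct,
            "PredefinedMetricSpecification": {
                "PredefinedMetricType": f"DynamoDB{dimension}CapacityUtilization"
            },
        },
    }
    return target, policy


def apply_scaling(target: Dict[str, Any], policy: Dict[str, Any]) -> None:
    """Send both requests; requires boto3 and AWS credentials."""
    import boto3

    client = boto3.client("application-autoscaling")
    client.register_scalable_target(**target)
    client.put_scaling_policy(**policy)


if __name__ == "__main__":
    # "Orders" and "by-customer" are placeholder names.
    target, policy = scaling_requests("Orders", "Write", 5, 100, index="by-customer")
    print(target)
    print(policy)
```

Because tables and indexes are separate scalable targets, the same helper is called once per table or index and once per dimension (read, write).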

Warm Throughput (November 2024)

- Announced in November 2024, DynamoDB now supports warm throughput for tables and indexes.

- Warm Throughput: The read and write capacity your DynamoDB table or index can immediately support, based on historical usage.

- Provides visibility into the number of read and write operations your table can readily handle.

- Automatic Growth: DynamoDB automatically adjusts warm throughput values as your usage increases.

- Pre-warming Capability: You can proactively set higher warm throughput values to prepare for anticipated traffic spikes.

  - Useful for planned events like product launches, sales events, or marketing campaigns.

  - Ensures your table is immediately ready to handle increased load from the moment the event begins.

  - Prevents throttling during sudden traffic surges.

- Availability:

  - Available for both provisioned and on-demand tables and indexes.

  - Available in all AWS commercial Regions and AWS GovCloud (US) Regions.

- Pricing:

  - Warm throughput values are available at no cost.

  - Pre-warming your table’s throughput incurs a charge.

- Use Cases:

  - Peak events with 10x or 100x traffic surges in short periods.

  - Product launches or shopping events (e.g., Black Friday).

  - Marketing campaigns with predictable traffic spikes.

  - Gaming events or live streaming scenarios.

- How It Works:

  - Each partition is limited to 1,000 write units per second and 3,000 read units per second.

  - Warm throughput indicates the current capacity available across all partitions.

  - Pre-warming increases this capacity before the traffic spike occurs.

  - Auto Scaling takes time to react; pre-warming ensures immediate readiness.
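
To make the pre-warming step more concrete, here is a minimal sketch. It assumes the `WarmThroughput` parameter of `UpdateTable` (with `ReadUnitsPerSecond` and `WriteUnitsPerSecond`) as introduced with the November 2024 release, so check that your SDK version supports it. The helper names are hypothetical, and `min_partitions` is only a back-of-the-envelope lower bound derived from the per-partition limits above, not a description of how DynamoDB actually allocates partitions.

```python
import math
from typing import Any, Dict

PARTITION_READ_LIMIT = 3000   # read units per second per partition
PARTITION_WRITE_LIMIT = 1000  # write units per second per partition


def min_partitions(read_units: int, write_units: int) -> int:
    """Rough lower bound on partitions needed to serve the given throughput."""
    return max(
        math.ceil(read_units / PARTITION_READ_LIMIT),
        math.ceil(write_units / PARTITION_WRITE_LIMIT),
        1,
    )


def prewarm_request(table: str, read_units: int, write_units: int) -> Dict[str, Any]:
    """Build UpdateTable parameters that raise the table's warm throughput."""
    return {
        "TableName": table,
        "WarmThroughput": {
            "ReadUnitsPerSecond": read_units,
            "WriteUnitsPerSecond": write_units,
        },
    }


def prewarm(table: str, read_units: int, write_units: int) -> None:
    """Send the request; requires boto3 and AWS credentials."""
    import boto3

    client = boto3.client("dynamodb")
    client.update_table(**prewarm_request(table, read_units, write_units))


if __name__ == "__main__":
    # "Orders" is a placeholder table name; the numbers are illustrative.
    print(prewarm_request("Orders", 30000, 10000))
    print(min_partitions(30000, 10000))
```

Pre-warming is typically done ahead of the event, since the point is to have the capacity in place before traffic arrives rather than waiting for Auto Scaling to catch up.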

Capacity Modes

Provisioned Capacity Mode with Auto Scaling

- You specify the number of read and write capacity units.

- Auto Scaling automatically adjusts capacity within configured min/max bounds.

- Best for predictable workloads with gradual changes.

- Cost-effective when you can forecast capacity needs.

- Supports warm throughput and pre-warming.

On-Demand Capacity Mode

- DynamoDB automatically scales to accommodate workload.

- No need to specify capacity units or configure Auto Scaling.

- Pay per request (no minimum capacity).

- Best for unpredictable workloads or new applications.

- Supports warm throughput and pre-warming.

- Pricing reduced by 50% effective November 1, 2024.

Auto Scaling Best Practices

- Set Appropriate Target Utilization: 70% is recommended to provide buffer for traffic spikes.

- Configure Realistic Min/Max Bounds: Ensure maximum capacity can handle peak loads.

- Use Warm Throughput for Planned Events: Pre-warm tables before anticipated traffic spikes.

- Monitor CloudWatch Metrics: Track consumed capacity, throttled requests, and Auto Scaling activities.

- Test Scaling Behavior: Simulate traffic patterns to validate Auto Scaling configuration.

- Consider On-Demand for Unpredictable Workloads: Eliminates need for capacity planning.

- Configure Alarms: Set up CloudWatch alarms for throttling events and capacity changes.

- Review Scaling History: Analyze past scaling activities to optimize configuration.

- Account for Partition Limits: Remember individual partition limits (1,000 WCU, 3,000 RCU).

Auto Scaling Limitations

- Auto Scaling takes time to react to traffic changes (not instantaneous).

- Scale-up is faster than scale-down: the scale-down alarm fires only after traffic stays below the lower threshold for 15 consecutive minutes.

- Individual partitions have throughput limits (1,000 WCU, 3,000 RCU per partition).

- Hot partitions can cause throttling even with sufficient overall capacity.

- Auto Scaling cannot prevent throttling during sudden, extreme traffic spikes (use pre-warming).

- For Global Tables, write capacity Auto Scaling settings are synchronized across replicas, while read capacity Auto Scaling can be managed per replica.
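
The hot-partition limitation is easiest to see with numbers. The sketch below uses made-up per-partition write rates (DynamoDB does not expose these directly, so the input dictionary is purely hypothetical) to show how a table can be throttled while total consumption sits well under the provisioned capacity.

```python
from typing import Dict, List

PARTITION_WCU_LIMIT = 1000  # per-partition write limit noted above


def hot_partitions(
    write_units_by_partition: Dict[str, float],
    limit: float = PARTITION_WCU_LIMIT,
) -> List[str]:
    """Return the partitions whose write rate exceeds the per-partition limit."""
    return sorted(p for p, w in write_units_by_partition.items() if w > limit)


if __name__ == "__main__":
    provisioned_wcu = 4000
    # Illustrative traffic: one key range receives most of the writes.
    traffic = {"p0": 1500, "p1": 300, "p2": 200, "p3": 100}
    total = sum(traffic.values())
    print(f"total={total} provisioned={provisioned_wcu}")
    print("hot partitions:", hot_partitions(traffic))
```

In this example total demand is about half of what is provisioned, yet `p0` exceeds 1,000 write units per second, so raising table-level capacity alone would not help; spreading writes across more partition key values would.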

Monitoring Auto Scaling

- CloudWatch Metrics:

  - `ConsumedReadCapacityUnits` / `ConsumedWriteCapacityUnits`

  - `ProvisionedReadCapacityUnits` / `ProvisionedWriteCapacityUnits`

  - `ReadThrottleEvents` / `WriteThrottleEvents`

  - `UserErrors` (HTTP 400 errors; throttling is reported separately through the throttle metrics above)

- Auto Scaling Activity: View scaling activities in Application Auto Scaling console.

- Warm Throughput Values: Monitor current warm throughput via DynamoDB console or APIs.

- Alarms: Configure CloudWatch alarms for proactive monitoring.
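
As one way to turn these metrics into a utilization figure, the sketch below builds a `get_metric_statistics` query for a consumed-capacity metric and converts a `Sum` datapoint into a percentage of provisioned capacity. Consumed capacity is reported as a total over the period, so it is divided by the period length to get units per second. The helper names and the table name are assumptions made for the example.

```python
from datetime import datetime, timedelta, timezone
from typing import Any, Dict


def metric_query(
    table: str,
    metric_name: str,
    start: datetime,
    end: datetime,
    period_seconds: int = 60,
) -> Dict[str, Any]:
    """Build CloudWatch get_metric_statistics parameters for a table metric."""
    return {
        "Namespace": "AWS/DynamoDB",
        "MetricName": metric_name,
        "Dimensions": [{"Name": "TableName", "Value": table}],
        "StartTime": start,
        "EndTime": end,
        "Period": period_seconds,
        "Statistics": ["Sum"],
    }


def utilization_pct(
    consumed_sum: float, period_seconds: int, provisioned_units: float
) -> float:
    """Convert a Sum datapoint into percent of provisioned capacity."""
    if period_seconds <= 0 or provisioned_units <= 0:
        raise ValueError("period and provisioned capacity must be positive")
    per_second = consumed_sum / period_seconds
    return 100.0 * per_second / provisioned_units


if __name__ == "__main__":
    end = datetime.now(timezone.utc)
    query = metric_query(
        "Orders", "ConsumedWriteCapacityUnits", end - timedelta(minutes=15), end
    )
    print(query)
    # Pass the query to boto3.client("cloudwatch").get_metric_statistics(**query)
    # when AWS credentials are available.
    print(utilization_pct(consumed_sum=4200, period_seconds=60, provisioned_units=100))
```

Comparing this percentage against the configured target utilization gives a quick check on whether scaling activity is keeping up with demand.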

AWS Certification Exam Practice Questions

- Questions are collected from the Internet, and the answers are marked as per my knowledge and understanding (which might differ from yours).

- AWS services are updated every day, and both the answers and questions might be outdated soon, so research accordingly.

- AWS exam questions are not updated to keep pace with AWS updates, so even if the underlying feature has changed, the question might not be updated.

- Open to further feedback, discussion and correction.

- An application running on Amazon EC2 instances writes data synchronously to an Amazon DynamoDB table configured for 60 write capacity units. During normal operation, the application writes 50 KB/s to the table but can scale up to 500 KB/s during peak hours. The application is currently getting throttling errors from the DynamoDB table during peak hours. What is the MOST cost-effective change to support the increased traffic with minimal changes to the application?

  - Use Amazon SNS to manage the write operations to the DynamoDB table

  - Change DynamoDB table configuration to 600 write capacity units

  - Increase the number of Amazon EC2 instances to support the traffic

  - Configure Amazon DynamoDB Auto Scaling to handle the extra demand

- A company is planning a major product launch that will cause a 100x traffic spike to their DynamoDB table for 2 hours. They want to ensure the table can handle the load immediately without throttling. What should they do?

  - Configure Auto Scaling with a high maximum capacity.

  - Switch to on-demand capacity mode.

  - Pre-warm the table using warm throughput before the launch.

  - Manually increase provisioned capacity before the launch.

- A DynamoDB table with Auto Scaling configured is experiencing throttling despite having sufficient overall capacity. What is the MOST likely cause?

  - Auto Scaling is not configured correctly.

  - The target utilization is set too high.

  - Hot partitions are exceeding per-partition throughput limits.

  - CloudWatch alarms are not triggering properly.

- What is the recommended target utilization percentage for DynamoDB Auto Scaling?

  - 50%

  - 70%

  - 90%

  - 100%

- A company wants to minimize costs for a DynamoDB table with unpredictable traffic patterns. Which capacity mode should they choose?

  - Provisioned capacity with Auto Scaling

  - On-demand capacity mode

  - Provisioned capacity with manual scaling

  - Reserved capacity

- Which of the following statements about DynamoDB warm throughput are correct? (Select TWO)

  - Warm throughput values are available at no cost.

  - Warm throughput is only available for provisioned capacity mode.

  - Pre-warming a table incurs a charge.

  - Warm throughput cannot be used with on-demand capacity mode.

  - Warm throughput eliminates the need for Auto Scaling.
