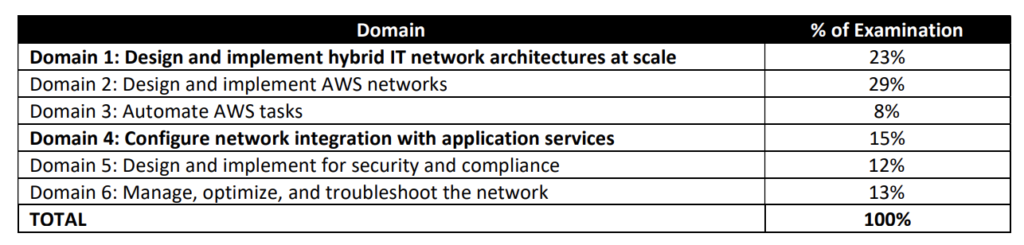

Google Cloud – Professional Cloud Network Engineer Certification learning path

The Google Cloud – Professional Cloud Network Engineer certification exam covers almost all of the Google Cloud network services.

Google Cloud – Professional Cloud Network Engineer Certification Summary

- Has 50 questions to be answered in 2 hours.

- Covers a wide range of Google Cloud services, mainly focusing on network services.

- Hands-on experience is a MUST. If you have not worked on GCP before, make sure you do lots of labs; otherwise you will be clueless on some of the questions and commands.

- I took the Coursera and A Cloud Guru courses, which are really vast, but hands-on or practical knowledge is a MUST.

Google Cloud – Professional Cloud Network Engineer Certification Resources

- Courses

- Coursera – Google Cloud Networking Professional Certificate, which includes

- Coursera – Networking in Google Cloud

- Coursera – Hands-On Labs in Google Cloud for Networking Engineers

- A Cloud Guru – Google Cloud Certified – Professional Cloud Architect

- Practice tests

- Use Google Free Tier and Qwiklabs as much as possible.

Google Cloud – Professional Cloud Network Engineer Certification Topics

Network Services

- Refer to the Google Cloud Networking Services Cheat Sheet

- Virtual Private Cloud

- Understand Virtual Private Cloud (VPC), subnets, and how to host applications within them

- VPC Routes determine the next hop for traffic. HINT: Routes can be defined for specific network tags as well. The route with the most specific destination range takes priority.

- Firewall rules control the traffic to and from instances. HINT: Rules with lower integers have higher priority. Firewall rules can be applied to specific tags.

- VPC Peering allows internal or private IP address connectivity across two VPC networks regardless of whether they belong to the same project or the same organization. HINT: VPC Peering uses private IPs and does not support transitive peering

- Shared VPC allows an organization to connect resources from multiple projects to a common VPC network so that they can communicate with each other securely and efficiently using internal IPs from that network. HINT: VLAN attachments and Cloud Routers for Interconnect must be created in the host project

- Understand the concept of internal and external IPs and the difference between static and ephemeral IPs

- VPC Subnets support primary and secondary (alias) IP ranges

- Primary IP range of an existing subnet can be expanded by modifying its subnet mask, setting the prefix length to a smaller number.

- Private Access options for services allow instances with internal IP addresses to communicate with Google APIs and services.

- Private Google Access allows VMs to connect to the set of external IP addresses used by Google APIs and services by enabling Private Google Access on the subnet used by the VM’s network interface. HINT: Private Google Access is enabled on the subnet and not on the VPC level

- VPC Flow Logs records a sample of network flows sent from and received by VM instances, including instances used as GKE nodes.

- Firewall Rules Logging enables auditing, verifying, and analyzing the effects of the firewall rules. HINT: The default implied ingress deny rule is not captured by Firewall Rules Logging. Add an explicit deny rule to log that traffic.

- Resources within a VPC network can communicate with one another by using internal IPv4 addresses
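
Two of the VPC points above, "more specific takes priority" for routes and expanding a primary range by lowering the prefix length, can be sketched with Python's standard `ipaddress` module. This is only an illustration: the route names, ranges, and next hops are made up, and real route selection also weighs route priority and network tags, which the sketch ignores.

```python
import ipaddress

# Hypothetical route table; names, ranges, and next hops are invented for illustration.
ROUTES = [
    {"name": "default-internet", "dest_range": "0.0.0.0/0", "next_hop": "default-internet-gateway"},
    {"name": "to-onprem", "dest_range": "10.0.0.0/8", "next_hop": "vpn-tunnel-1"},
    {"name": "to-appliance", "dest_range": "10.20.0.0/16", "next_hop": "nva-instance"},
]


def pick_route(routes, destination_ip):
    """Return the matching route with the longest prefix, or None if nothing matches."""
    dest = ipaddress.ip_address(destination_ip)
    matches = [r for r in routes if dest in ipaddress.ip_network(r["dest_range"])]
    if not matches:
        return None
    # Longest prefix length == most specific destination range.
    return max(matches, key=lambda r: ipaddress.ip_network(r["dest_range"]).prefixlen)


def is_valid_expansion(current_range, new_range):
    """True if new_range has a smaller prefix length and still contains current_range."""
    current = ipaddress.ip_network(current_range)
    new = ipaddress.ip_network(new_range)
    return new.prefixlen < current.prefixlen and current.subnet_of(new)
```

For example, `pick_route(ROUTES, "10.20.5.4")` selects `to-appliance` because `/16` is more specific than `/8` or `/0`, and `is_valid_expansion("10.1.0.0/24", "10.1.0.0/20")` holds because `/20` is a smaller prefix length that still contains the original range.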

- Hybrid Connectivity

- Understand Hybrid Connectivity options

- Cloud VPN

- Cloud VPN provides secure connectivity from the on-premises data center to the GCP network through an encrypted IPsec tunnel over the public internet. Cloud VPN is not a dedicated private connection

- Understand the requirements for setting up Cloud VPN.

- Cloud VPN is quick to set up, which makes it a good way to test hybrid connectivity

- Understand the limitations of Cloud VPN, especially the 3 Gbps limit per tunnel, and how throughput can be improved with multiple tunnels.

- Cloud VPN requires non-overlapping primary and secondary IP address ranges between the on-premises and GCP VPC networks

- HA VPN provides a highly available and secure connection between the on-premises and the VPC network through an IPsec VPN connection in a single region
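
Two of the Cloud VPN planning points above, the 3 Gbps limit and the non-overlapping range requirement, reduce to simple arithmetic and a CIDR overlap check. The following is a rough sketch under stated assumptions: the 3 Gbps figure is taken from the notes above and treated as a per-tunnel value, the function names are hypothetical, and real throughput depends on packet size and other factors.

```python
import ipaddress
import math

# Per-tunnel bandwidth figure quoted in the notes above (an assumption, not a guarantee).
TUNNEL_LIMIT_GBPS = 3.0


def tunnels_needed(target_gbps, per_tunnel_gbps=TUNNEL_LIMIT_GBPS):
    """Minimum number of tunnels whose combined limit covers the target bandwidth."""
    if target_gbps <= 0:
        raise ValueError("target_gbps must be positive")
    return math.ceil(target_gbps / per_tunnel_gbps)


def overlapping_ranges(onprem_cidrs, vpc_cidrs):
    """Return every (on-prem, VPC) pair of CIDR ranges that overlap."""
    onprem = [ipaddress.ip_network(c) for c in onprem_cidrs]
    vpc = [ipaddress.ip_network(c) for c in vpc_cidrs]
    return [(str(a), str(b)) for a in onprem for b in vpc if a.overlaps(b)]
```

With these assumptions, a 10 Gbps target needs `tunnels_needed(10)` tunnels, and an empty list from `overlapping_ranges(...)` means the on-premises ranges and the VPC primary and secondary ranges can coexist.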

- Cloud Interconnect

- Cloud Interconnect provides direct connectivity from the on-premises data center to GCP network

- Dedicated Interconnect provides a direct physical connection between the on-premises network and Google’s network. Suited to bandwidth needs of 10 Gbps or more

- Partner Interconnect provides connectivity between the on-premises and VPC networks through a supported service provider. Supports 50 Mbps to 10 Gbps

- Understand Dedicated Interconnect vs Partner Interconnect and when to choose each

- Know Interconnect as the reliable, high-speed, low-latency, and dedicated bandwidth option.

- Cloud Monitoring monitors interconnect links. Circuit Operational Status metric threshold tracks the circuits while Interconnect Operational Status metric tracks all the links

- Cloud Router

- Cloud Router provides dynamic routing using BGP with HA VPN and Cloud Interconnect

- Cloud Router Global routing mode provides visibility to resources in all regions

- Cloud Router uses the Multi-Exit Discriminator (MED) value to route traffic. Paths with the same MED value result in an Active/Active connection, and paths with different MED values result in an Active/Passive connection
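
The MED behaviour described above can be sketched as follows. This is a simplified model, not Cloud Router's implementation: it assumes the standard BGP convention that the lowest MED is preferred, and the path names and MED values are hypothetical.

```python
def classify_paths(paths):
    """Return (mode, active_path_names) for a list of {"name", "med"} dicts.

    The lowest MED wins. If several paths share the lowest MED they all carry
    traffic (active/active); otherwise the single winner is active and the
    rest are standby (active/passive).
    """
    if len(paths) < 2:
        raise ValueError("need at least two paths to compare")
    lowest = min(p["med"] for p in paths)
    active = [p["name"] for p in paths if p["med"] == lowest]
    mode = "active/active" if len(active) > 1 else "active/passive"
    return mode, active
```

For instance, two tunnels both advertised with MED 100 come back as active/active, while MED 100 and MED 200 come back as active/passive with only the MED 100 tunnel active.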

- Cloud NAT

- Cloud NAT allows VM instances without external IP addresses and private GKE clusters to send outbound packets to the internet and receive any corresponding established inbound response packets.

- Requests from a VM are not routed through Cloud NAT if the VM has an external IP address

- Cloud Peering

- Google Cloud Peering provides Direct Peering and Carrier Peering

- Peering provides a direct path from the on-premises network to Google services, including Google Cloud products that can be exposed through one or more public IP addresses. It does not provide a private dedicated connection

- Cloud Load Balancing

- Google Cloud Load Balancing provides scaling, high availability, and traffic management for your internet-facing and private applications.

- Understand Google Load Balancing options and their use cases esp. which is global and internal and what protocols they support.

- Network Load Balancer – regional, external, pass-through and supports TCP/UDP

- Internal TCP/UDP Load Balancer – regional, internal, pass-through and supports TCP/UDP

- HTTP/S Load Balancer – regional/global, external, proxy and supports HTTP/S

- Internal HTTP/S Load Balancer – regional/global, internal, proxy and supports HTTP/S

- SSL Proxy Load Balancer – regional/global, external, proxy, supports SSL with SSL offload capability

- TCP Proxy Load Balancer – regional/global, external, proxy, supports TCP without SSL offload capability

- Cloud Load Balancing supports health checks with managed instance groups
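
As a revision aid, the load balancer options listed above can be captured in a small lookup table and filtered by exposure and protocol. This is a study sketch rather than an official decision tree: the table only encodes the attributes from the list above, it treats the HTTP/S load balancers as proxy-based, and the `candidates` helper is hypothetical.

```python
# Attributes transcribed from the list above; the HTTP/S entries are treated as proxies.
LOAD_BALANCERS = [
    {"name": "Network Load Balancer", "exposure": "external",
     "mode": "pass-through", "protocols": {"TCP", "UDP"}},
    {"name": "Internal TCP/UDP Load Balancer", "exposure": "internal",
     "mode": "pass-through", "protocols": {"TCP", "UDP"}},
    {"name": "HTTP/S Load Balancer", "exposure": "external",
     "mode": "proxy", "protocols": {"HTTP", "HTTPS"}},
    {"name": "Internal HTTP/S Load Balancer", "exposure": "internal",
     "mode": "proxy", "protocols": {"HTTP", "HTTPS"}},
    {"name": "SSL Proxy Load Balancer", "exposure": "external",
     "mode": "proxy", "protocols": {"SSL"}},
    {"name": "TCP Proxy Load Balancer", "exposure": "external",
     "mode": "proxy", "protocols": {"TCP"}},
]


def candidates(exposure, protocol):
    """List the load balancers that match the exposure and protocol, in table order."""
    protocol = protocol.upper()
    return [
        lb["name"]
        for lb in LOAD_BALANCERS
        if lb["exposure"] == exposure and protocol in lb["protocols"]
    ]
```

Calling `candidates("external", "udp")` narrows the choice to the Network Load Balancer, while `candidates("external", "tcp")` returns both the Network Load Balancer and the TCP Proxy Load Balancer, which is where the pass-through vs proxy distinction decides.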

- Cloud CDN

- Understand Cloud CDN as the global content delivery network

- Know that Cloud CDN works only with the global external HTTP/S Load Balancer

- Cache is not removed if the underlying origin data is removed. Cache has to be invalidated explicitly, or is removed once expired.

- Cloud CDN does not compress but serves the response from the origin as is. HINT: As the LB adds a Via header, some web servers do not compress responses and must be configured to ignore the Via header
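
The cache behaviour noted above (an object stays cached after the origin copy is deleted, until it expires or is invalidated) can be illustrated with a toy in-memory cache. This is a conceptual sketch only; the `ToyEdgeCache` class, its methods, and the explicit `now` timestamps are hypothetical and are not part of any Cloud CDN API.

```python
class ToyEdgeCache:
    """Minimal TTL cache that knows nothing about the origin once an object is stored."""

    def __init__(self):
        self._entries = {}  # key -> (body, expiry_time)

    def put(self, key, body, ttl, now):
        """Store body under key until now + ttl (times are plain numbers, e.g. seconds)."""
        self._entries[key] = (body, now + ttl)

    def get(self, key, now):
        """Return the cached body, or None if it is missing or expired."""
        entry = self._entries.get(key)
        if entry is None:
            return None
        body, expires_at = entry
        if now >= expires_at:
            del self._entries[key]  # expired entries are dropped on access
            return None
        return body

    def invalidate(self, key):
        """Explicitly remove an entry, regardless of its remaining TTL."""
        self._entries.pop(key, None)
```

Deleting the object at the origin never touches `_entries`, so `get` keeps serving the old body until the TTL passes or `invalidate` is called, which mirrors the two removal paths listed above.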

- Cloud DNS

- Understand Cloud DNS and its features

- supports migration or importing of records from on-premises using JSON/YAML format

- supports DNSSEC, a feature of DNS, that authenticates responses to domain name lookups and protects the domains from spoofing and cache poisoning attacks

Identity Services

- Cloud Identity and Access Management

- Identity and Access Management – IAM provides administrators the ability to manage cloud resources centrally by controlling who can take what action on specific resources.

- The Compute Network Admin role does not provide access to SSL certificates and firewall rules. The Security Admin role must be assigned for those

Compute Services

- Compute services like Google Compute Engine and Google Kubernetes Engine are covered lightly, mainly from a networking perspective

- Google Compute Engine

- Google Compute Engine is the best IaaS option for compute and provides fine-grained control

- Difference between managed vs unmanaged instance groups and auto-healing feature

- Regional Managed Instance group helps spread load across instances in multiple zones within the same region providing scalability and HA

- Managed Instance group helps perform canary and rolling updates

- Managed Instance group autoscaling can be configured on CPU or load balancer metrics or custom metrics.

- Managing access using OS Login or project and instance metadata

- Google Kubernetes Engine

- Google Kubernetes Engine, powered by the open-source container scheduler Kubernetes, enables you to run containers on Google Cloud Platform.

- Understand GKE Networking in detail

- Understand GKE Cluster types based on networking i.e. Route Based and VPC-Native cluster

- Understand GKE VPC-Native cluster IP Allocation

- Private clusters help isolate nodes from having inbound and outbound connectivity to the public internet by providing nodes with internal IP addresses only.

Security Services

- Cloud Armor

- Cloud Armor protects the applications from multiple types of threats, including DDoS attacks and application attacks like XSS and SQLi

- with GKE needs to be configured with GKE Ingress

- can be used to blacklist IP addresses

- supports preview mode to understand patterns without blocking the users

All the Best !!