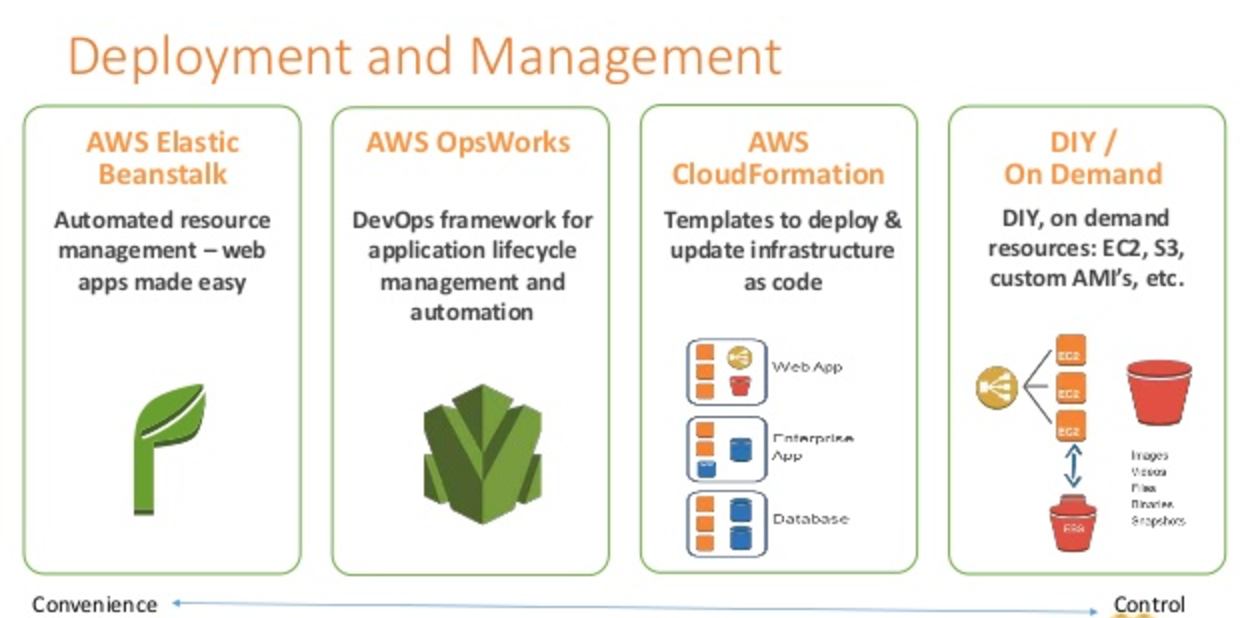

AWS Elastic Beanstalk vs OpsWorks vs CloudFormation

AWS offers multiple options for provisioning IT infrastructure and for deploying and managing applications. They range from the convenience and ease of setup of a managed service to low-level, granular control.

AWS Elastic Beanstalk

- AWS Elastic Beanstalk is a higher-level service that lets you quickly deploy web or worker environments with minimal management effort, using EC2, Docker (via ECS), Elastic Load Balancing, Auto Scaling, RDS, CloudWatch, etc.

- Elastic Beanstalk is the fastest and simplest way to get an application up and running on AWS, and is well suited to developers who want to deploy code without worrying about the underlying infrastructure

- Elastic Beanstalk provides an environment to easily deploy and run applications in the cloud. It is integrated with developer tools and provides a one-stop experience for application lifecycle management

- Elastic Beanstalk requires minimal configuration and handles deployment, monitoring, and the elasticity/scalability of the application

- A user doesn't need to do much more than write the application code and define a few configuration settings on Elastic Beanstalk
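To make the "minimal configuration" point concrete, here is a small Python sketch. It assumes you describe an environment as Elastic Beanstalk option settings, that is, (namespace, option name, value) triples. The namespaces `aws:elasticbeanstalk:environment` and `aws:autoscaling:asg` are real Elastic Beanstalk option namespaces. The specific values and the `to_option_settings` helper are hypothetical and only serve as illustration. Scaling and load balancing come down to a handful of settings rather than resources you build yourself.

```python
import json

# Hypothetical environment settings, grouped by Elastic Beanstalk option namespace.
# Elastic Beanstalk creates the load balancer and Auto Scaling group from these;
# the values are illustrative only.
ENVIRONMENT_SETTINGS = {
    "aws:elasticbeanstalk:environment": {
        # or "SingleInstance" for one EC2 instance without a load balancer
        "EnvironmentType": "LoadBalanced",
    },
    "aws:autoscaling:asg": {
        "MinSize": "2",  # keep at least two instances running
        "MaxSize": "6",  # scale out to at most six instances
    },
}


def to_option_settings(settings):
    """Flatten {namespace: {option: value}} into the list of
    Namespace/OptionName/Value dicts used for Elastic Beanstalk option settings.
    Sorting keeps the output order stable."""
    return [
        {"Namespace": namespace, "OptionName": option, "Value": value}
        for namespace, options in sorted(settings.items())
        for option, value in sorted(options.items())
    ]


if __name__ == "__main__":
    print(json.dumps(to_option_settings(ENVIRONMENT_SETTINGS), indent=2))
```

A list in this shape could be supplied, for example, through the `OptionSettings` property of an `AWS::ElasticBeanstalk::Environment` CloudFormation resource. The same namespaces can also be set in `.ebextensions` configuration files.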

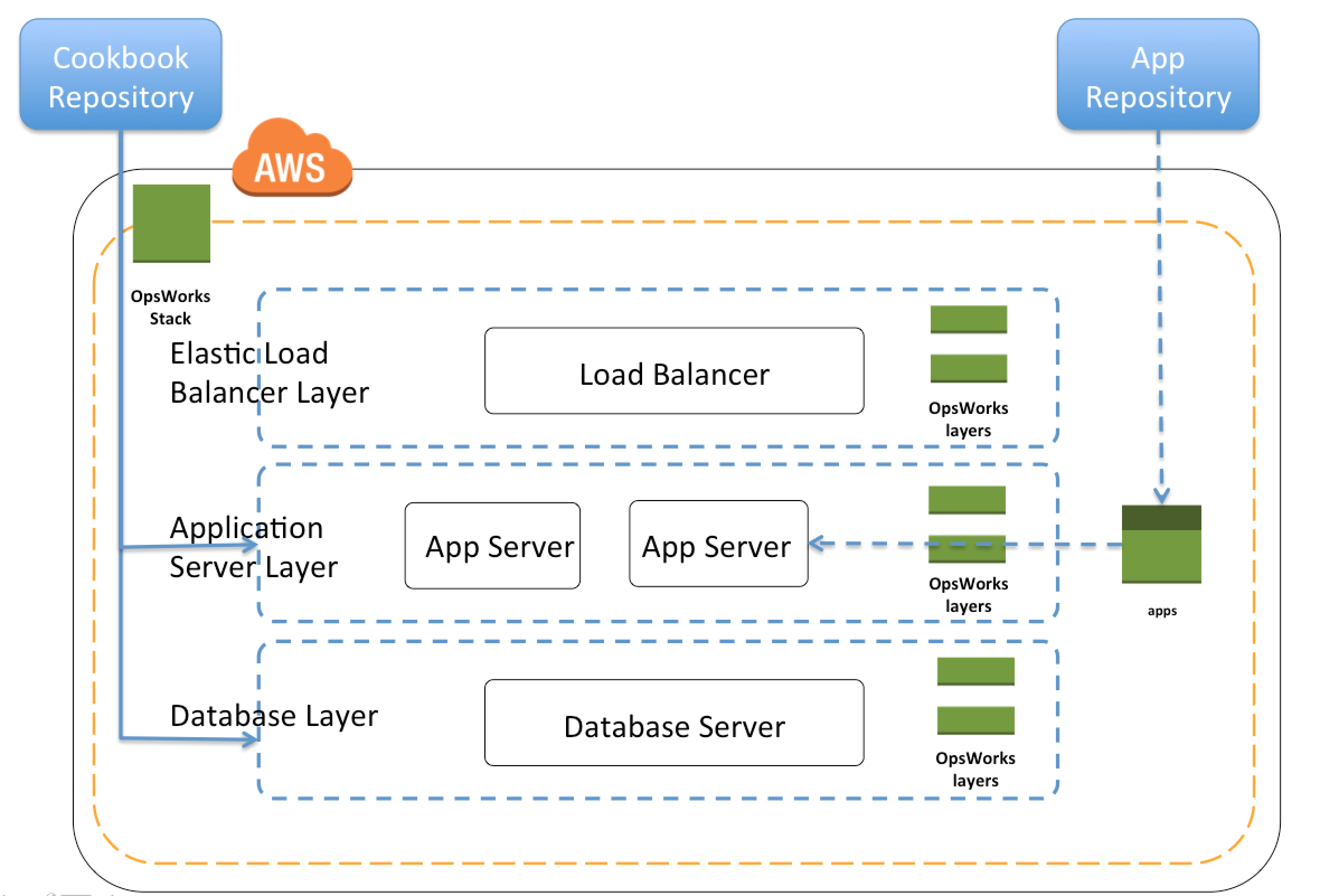

AWS OpsWorks

- AWS OpsWorks is an application management service that simplifies software configuration, application deployment, scaling, and monitoring

- OpsWorks is recommended if you want to manage your infrastructure with a configuration management system such as Chef.

- OpsWorks supports custom Chef recipes, provides auto healing (self-healing) of instances, and organizes an application into layers

- Although OpsWorks is a deployment management service that helps you deploy applications with Chef recipes, it is not primarily meant to manage application scaling out of the box; scaling needs to be configured explicitly
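The Python sketch below models how an OpsWorks layer maps lifecycle events to custom Chef recipes. The five lifecycle event names (Setup, Configure, Deploy, Undeploy, Shutdown) come from OpsWorks Stacks. The layer dictionary follows the shape of the `CustomRecipes` and `LoadBasedAutoScaling` properties of a CloudFormation `AWS::OpsWorks::Layer` resource. The layer itself, the `mycookbook::...` recipe names, and the `recipes_for_event`/`scaling_enabled` helpers are hypothetical. The scaling block shows that scaling is something you opt into and configure yourself.

```python
# The five lifecycle events for which OpsWorks Stacks runs recipes on a layer.
LIFECYCLE_EVENTS = ("Setup", "Configure", "Deploy", "Undeploy", "Shutdown")

# Hypothetical PHP application layer. Cookbook and recipe names are placeholders.
php_layer = {
    "Name": "PHP App Server",
    "EnableAutoHealing": True,  # OpsWorks restarts instances it detects as failed
    "CustomRecipes": {
        "Setup": ["mycookbook::install_php_extensions"],
        "Configure": ["mycookbook::update_lb_members"],
        "Deploy": ["mycookbook::deploy_app", "mycookbook::warm_cache"],
    },
    # Scaling is not automatic: it has to be enabled and given thresholds.
    "LoadBasedAutoScaling": {
        "Enable": True,
        "UpScaling": {"CpuThreshold": 80.0, "InstanceCount": 1},
        "DownScaling": {"CpuThreshold": 30.0, "InstanceCount": 1},
    },
}


def recipes_for_event(layer, event):
    """Return the custom recipes a layer defines for one lifecycle event
    (an empty list if the layer defines none for that event)."""
    if event not in LIFECYCLE_EVENTS:
        raise ValueError(f"unknown lifecycle event: {event!r}")
    return list(layer.get("CustomRecipes", {}).get(event, []))


def scaling_enabled(layer):
    """True only if load-based scaling was explicitly enabled on the layer."""
    return bool(layer.get("LoadBasedAutoScaling", {}).get("Enable", False))


if __name__ == "__main__":
    for event in LIFECYCLE_EVENTS:
        print(event, recipes_for_event(php_layer, event))
    print("load-based scaling enabled:", scaling_enabled(php_layer))
```

In OpsWorks Stacks, load-based (and time-based) scaling only starts and stops instances that you have already created for the layer. It does not launch new instances on its own, which is one reason scaling has to be planned explicitly.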

AWS CloudFormation

- AWS CloudFormation enables modeling, provisioning and version-controlling of a wide range of AWS resources ranging from a single EC2 instance to a complex multi-tier, multi-region application

- CloudFormation is a low-level service that provides granular control to provision and manage stacks of AWS resources based on templates

- CloudFormation templates enable version control of the infrastructure and make deployment of environments easy and repeatable

- CloudFormation supports infrastructure needs of many different types of applications such as existing enterprise applications, legacy applications, applications built using a variety of AWS resources and container-based solutions (including those built using AWS Elastic Beanstalk).

- CloudFormation is not just an application deployment tool; it can provision any supported type of AWS resource

- CloudFormation is designed to complement both Elastic Beanstalk and OpsWorks

  - CloudFormation with Elastic Beanstalk

    - CloudFormation supports Elastic Beanstalk application environments as one of the AWS resource types.

    - This allows you, for example, to create and manage an AWS Elastic Beanstalk–hosted application along with an RDS database to store the application data. In addition to RDS instances, any other supported AWS resource can be added to the same stack.

  - CloudFormation with OpsWorks

    - CloudFormation also supports OpsWorks: OpsWorks components (stacks, layers, instances, and applications) can be modeled inside CloudFormation templates and provisioned as CloudFormation stacks.

    - This enables you to document, version-control, and share your OpsWorks configuration.

    - You can create either a single unified CloudFormation template or separate templates to provision the OpsWorks components and other related AWS resources, such as a VPC and an Elastic Load Balancer.
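The Python sketch below shows the Elastic Beanstalk plus RDS combination described above as a single CloudFormation template. It assumes you generate templates from code and commit the rendered JSON to a version control system such as Git. The resource types (`AWS::ElasticBeanstalk::Application`, `AWS::ElasticBeanstalk::Environment`, `AWS::RDS::DBInstance`), the `Ref`/`Fn::GetAtt` intrinsic functions, and the `aws:elasticbeanstalk:application:environment` namespace are real CloudFormation and Elastic Beanstalk constructs. The logical IDs, parameter names, property values, and the `build_template`/`render` helpers are hypothetical. The Elastic Beanstalk platform is left as a parameter. Networking, security groups, and deletion policies are omitted for brevity.

```python
import json


def build_template(env_name: str) -> dict:
    """Build a hypothetical CloudFormation template: an Elastic Beanstalk
    application and environment plus the RDS database it uses."""
    return {
        "AWSTemplateFormatVersion": "2010-09-09",
        "Description": f"Elastic Beanstalk app with RDS database ({env_name})",
        "Parameters": {
            "SolutionStackName": {"Type": "String", "Description": "Elastic Beanstalk platform"},
            "DBPassword": {"Type": "String", "NoEcho": True},
        },
        "Resources": {
            "AppDatabase": {
                "Type": "AWS::RDS::DBInstance",
                "Properties": {
                    "Engine": "mysql",
                    "DBInstanceClass": "db.t3.micro",
                    "AllocatedStorage": "20",
                    "MasterUsername": "appadmin",
                    "MasterUserPassword": {"Ref": "DBPassword"},
                },
            },
            "App": {
                "Type": "AWS::ElasticBeanstalk::Application",
                "Properties": {"Description": "Hypothetical sample application"},
            },
            "AppEnvironment": {
                "Type": "AWS::ElasticBeanstalk::Environment",
                "Properties": {
                    "ApplicationName": {"Ref": "App"},  # Ref returns the application name
                    "EnvironmentName": env_name,
                    "SolutionStackName": {"Ref": "SolutionStackName"},
                    "OptionSettings": [
                        {
                            # Expose the database endpoint to the app as an environment variable
                            "Namespace": "aws:elasticbeanstalk:application:environment",
                            "OptionName": "DB_HOST",
                            "Value": {"Fn::GetAtt": ["AppDatabase", "Endpoint.Address"]},
                        }
                    ],
                },
            },
        },
    }


def render(template: dict) -> str:
    # Sorted keys and a fixed indent give identical output for identical input,
    # so a diff between two commits shows only real infrastructure changes.
    return json.dumps(template, indent=2, sort_keys=True) + "\n"


if __name__ == "__main__":
    print(render(build_template("myapp-dev")))
```

Rendering one file per environment (for example with `"myapp-dev"` and `"myapp-prod"`) and committing them gives the versioned, repeatable environments described above. Each rendered file can then be deployed as its own CloudFormation stack.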

AWS Certification Exam Practice Questions

- Questions are collected from the Internet and the answers are marked as per my knowledge and understanding (which might differ from yours).

- AWS services are updated every day, and both the answers and questions might soon be outdated, so research accordingly.

- AWS exam questions are not updated to keep pace with AWS updates, so even if the underlying feature has changed, the question might not have been updated

- Open to further feedback, discussion and correction.

- Your team is excited about the use of AWS because now they have access to programmable infrastructure. You have been asked to manage your AWS infrastructure in a manner similar to the way you might manage application code. You want to be able to deploy exact copies of different versions of your infrastructure, stage changes into different environments, revert back to previous versions, and identify what versions are running at any particular time (development, test, QA, production). Which approach addresses this requirement?

  - Use cost allocation reports and AWS OpsWorks to deploy and manage your infrastructure.

  - Use AWS CloudWatch metrics and alerts along with resource tagging to deploy and manage your infrastructure.

  - Use AWS Elastic Beanstalk and a version control system like Git to deploy and manage your infrastructure.

  - Use AWS CloudFormation and a version control system like Git to deploy and manage your infrastructure.

- An organization is planning to use AWS for their production rollout. The organization wants to implement automation for deployment such that it will automatically create a LAMP stack, download the latest PHP installable from S3 and set up the ELB. Which of the below mentioned AWS services meets the requirement for making an orderly deployment of the software?

  - AWS Elastic Beanstalk

  - AWS CloudFront

  - AWS CloudFormation

  - AWS DevOps

- You are working with a customer who is using Chef configuration management in their data center. Which service is designed to let the customer leverage existing Chef recipes in AWS?

  - Amazon Simple Workflow Service

  - AWS Elastic Beanstalk

  - AWS CloudFormation

  - AWS OpsWorks