AWS EBS Performance Tips

- EBS performance depends on several factors, including I/O characteristics and the configuration of instances and volumes, and can be improved using Provisioned IOPS (PIOPS), EBS-Optimized instances, volume initialization (pre-warming), and RAID configurations.

EBS-Optimized or 10 Gigabit Network Instances

- An EBS-Optimized instance uses an optimized configuration stack and provides additional, dedicated capacity for EBS I/O.

- Optimization provides the best performance for the EBS volumes by minimizing contention between EBS I/O and other traffic from the instance.

- EBS-Optimized instances deliver dedicated throughput to EBS depending on the instance type used.

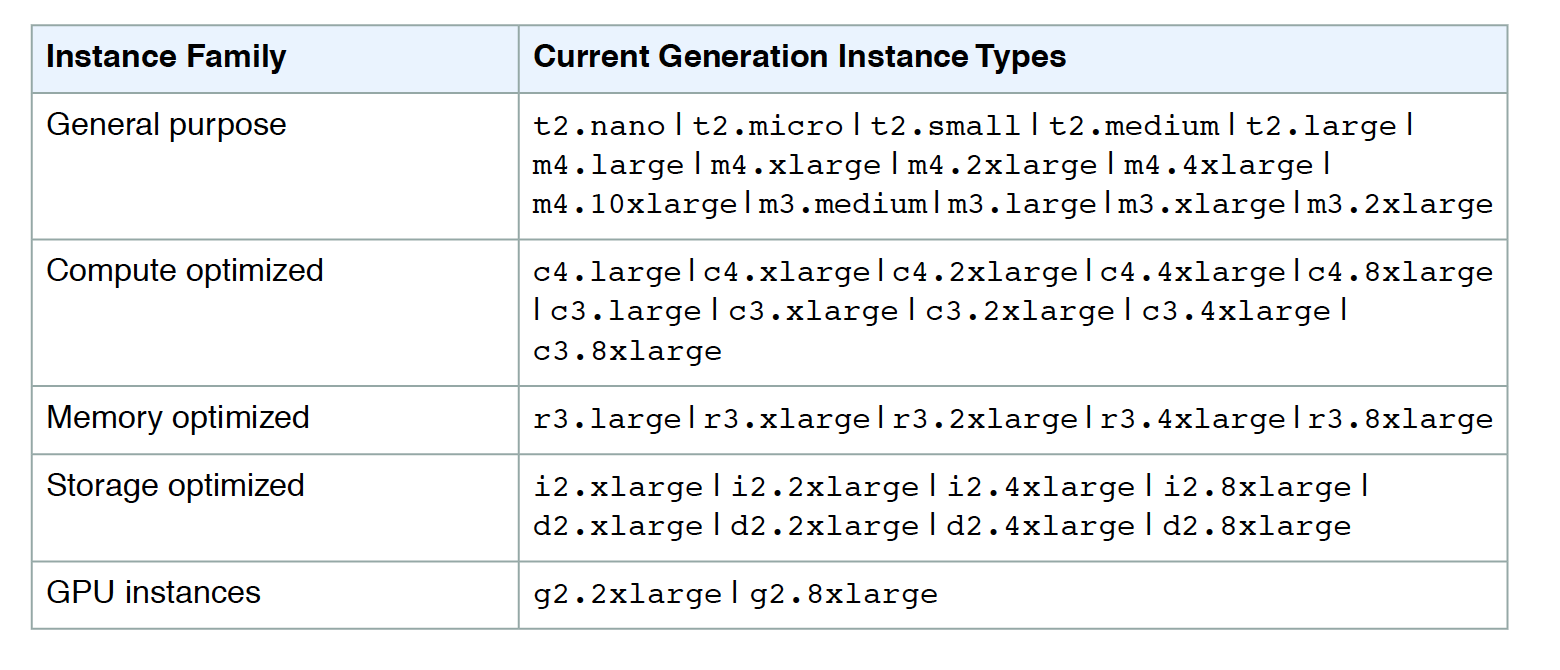

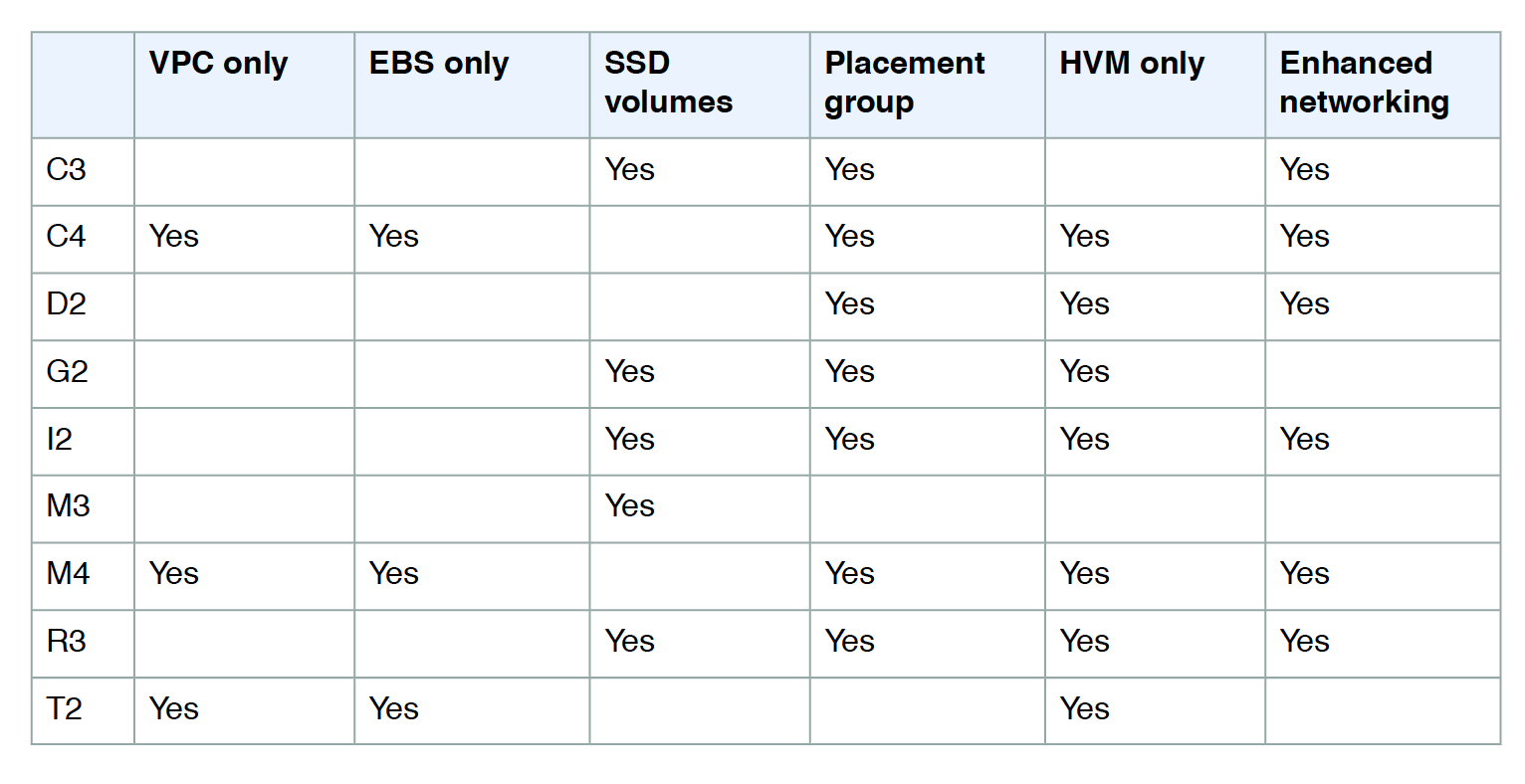

- Not all instance types support EBS optimization.

- Some instance types are EBS-Optimized by default, while others support it as an option that can be enabled.

- When EBS optimization is enabled for an instance that is not EBS-Optimized by default, an additional low hourly fee is charged for the dedicated capacity.

- When attached to an EBS-Optimized instance:

  - General Purpose (SSD) volumes are designed to deliver within 10% of their baseline and burst performance 99.9% of the time in a given year.

  - Provisioned IOPS (SSD) volumes are designed to deliver within 10% of their provisioned performance 99.9% of the time in a given year.
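
To make the "within 10%, 99.9% of the time" figures concrete, the arithmetic can be sketched as below. The function names and the 4,000 IOPS example volume are illustrative assumptions, not AWS terminology, and the calculation only restates the design targets quoted above.

```python
HOURS_PER_YEAR = 365 * 24  # 8,760 hours in a non-leap year


def performance_floor(provisioned_iops: float, tolerance: float = 0.10) -> float:
    """Lowest IOPS that still counts as 'within 10%' of the provisioned figure."""
    return provisioned_iops * (1 - tolerance)


def hours_outside_target(availability: float = 0.999) -> float:
    """Hours per year a volume may fall below the floor and still meet the target."""
    return HOURS_PER_YEAR * (1 - availability)


# Hypothetical volume provisioned at 4,000 IOPS
print(f"floor: {performance_floor(4000):.0f} IOPS")
print(f"allowed shortfall: {hours_outside_target():.2f} hours per year")
```

Under these assumptions, a 4,000 IOPS volume is designed to stay at or above roughly 3,600 IOPS for all but about 8.76 hours of the year.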

EBS Volume Initialization – Pre-warming

- Empty EBS volumes receive their maximum performance the moment that they are available and DO NOT require initialization (pre-warming).

- Previously, EBS volumes needed pre-warming before use to deliver maximum performance from the start. A volume was pre-warmed by writing zeros to the entire volume (for new volumes) or by reading the entire volume (for volumes created from snapshots).

- Storage blocks on volumes that were restored from snapshots must be initialized (pulled down from S3 and written to the volume) before the block can be accessed.

- This preliminary action takes time and can cause a significant increase in the latency of an I/O operation the first time each block is accessed.

- To avoid this initial performance hit in a production environment, the following options can be used:

  - Force the immediate initialization of the entire volume by using the `dd` or `fio` utilities to read from all of the blocks on a volume.

  - Enable fast snapshot restore (FSR) on a snapshot to ensure that the EBS volumes created from it are fully initialized at creation and instantly deliver all of their provisioned performance.
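
The read-every-block approach can be illustrated with a short Python sketch. This only mirrors the access pattern that `dd` or `fio` would produce; it is not a replacement for those tools. The function name `initialize_volume` is hypothetical, and on a real instance the path would be the attached block device (for example `/dev/xvdf`, which is an assumed name here) and the script would need root privileges. The demo reads a temporary file so that it runs anywhere.

```python
import os
import tempfile


def initialize_volume(device_path: str, block_size: int = 1024 * 1024) -> int:
    """Read device_path sequentially once and return the number of bytes read.

    Touching every block is what forces a snapshot-restored volume to pull
    its data down from S3 ahead of production traffic.
    """
    total = 0
    # buffering=0 returns a raw file object, so each read() is a direct OS read
    with open(device_path, "rb", buffering=0) as dev:
        while True:
            chunk = dev.read(block_size)
            if not chunk:  # empty bytes means end of device/file
                break
            total += len(chunk)
    return total


# Demo: a temporary file stands in for the block device (assumption for portability)
with tempfile.NamedTemporaryFile(delete=False) as tmp:
    tmp.write(b"\0" * (3 * 1024 * 1024 + 5))
    demo_path = tmp.name
try:
    print(initialize_volume(demo_path))  # prints 3145733
finally:
    os.remove(demo_path)
```

A large block size (1 MiB here) keeps the number of system calls low; the data itself is discarded because only the read matters.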

RAID Configuration

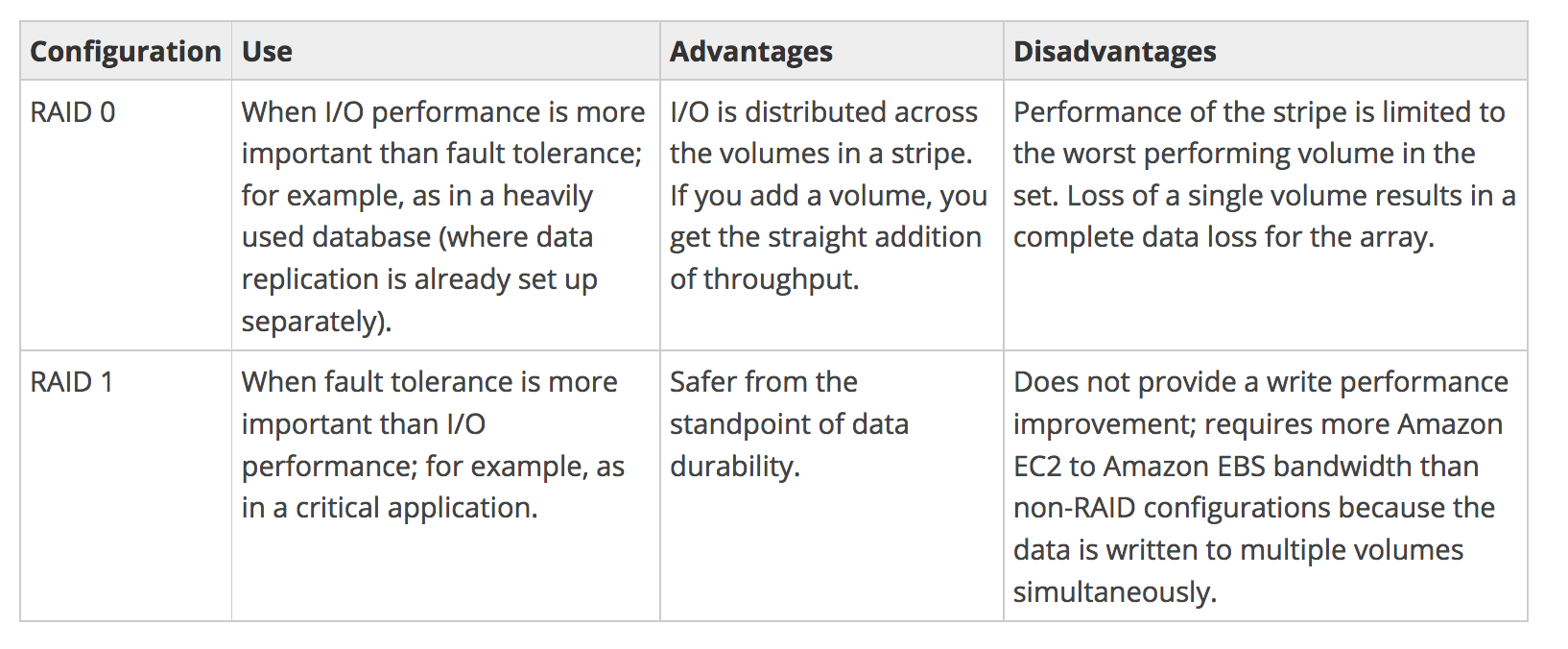

- EBS volumes can be striped when a single EBS volume does not meet the performance requirements.

- Striping volumes allows pushing tens of thousands of IOPS.

- EBS volumes are already replicated across multiple servers in an AZ for availability and durability, so AWS generally recommends striping for performance rather than durability.

- For greater I/O performance than can be achieved with a single volume, RAID 0 can stripe multiple volumes together; for on-instance redundancy, RAID 1 can mirror two volumes together.

- RAID 0 distributes I/O across all volumes in a stripe, so performance increases with each volume added.

- RAID 1 can be used to mirror volumes for durability, but it requires more EC2-to-EBS bandwidth because the data is written to multiple volumes simultaneously, so it should be used with EBS optimization.

- EBS volume data is replicated across multiple servers in an AZ to prevent the loss of data from the failure of any single component.

- AWS doesn’t recommend RAID 5 and 6 because the parity write operations of these modes consume the IOPS available for the volumes and can result in 20-30% fewer usable IOPS than RAID 0.

- A 2-volume RAID 0 config can outperform a 4-volume RAID 6 that costs twice as much.
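
The RAID figures above can be turned into a rough back-of-the-envelope calculation. This is a sketch under simplifying assumptions: RAID 0 is treated as a perfect sum of its member volumes, and the parity overhead is modelled as the flat 20-30% reduction quoted above rather than a real per-write penalty. The function names and the 4,000 IOPS volumes are illustrative.

```python
def raid0_iops(volume_iops: list[int]) -> int:
    """Upper bound for a RAID 0 stripe: I/O is spread across every member."""
    return sum(volume_iops)


def parity_raid_usable_iops(volume_iops: list[int], penalty_percent: int) -> int:
    """Usable IOPS after applying a flat parity overhead relative to RAID 0."""
    return raid0_iops(volume_iops) * (100 - penalty_percent) // 100


volumes = [4000, 4000, 4000, 4000]  # four hypothetical 4,000 IOPS volumes

print(raid0_iops(volumes))                   # 16000
print(parity_raid_usable_iops(volumes, 20))  # 12800
print(parity_raid_usable_iops(volumes, 30))  # 11200
```

The totals are ceilings only; the instance's own EBS bandwidth can cap what is actually delivered.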

AWS Certification Exam Practice Questions

- Questions are collected from the Internet and the answers are marked as per my knowledge and understanding (which might differ from yours).

- AWS services are updated every day and both the answers and questions might be outdated soon, so research accordingly.

- AWS exam questions are not updated to keep pace with AWS updates, so even if the underlying feature has changed, the question might not be updated.

- Open to further feedback, discussion and correction.

- A user is trying to pre-warm a blank EBS volume attached to a Linux instance. Which of the below mentioned steps should be performed by the user?

- There is no need to pre-warm an EBS volume (with latest update no pre-warming is needed)

- Contact AWS support to pre-warm (This used to be the case before, but pre-warming is not necessary now)

- Unmount the volume before pre-warming

- Format the device

- A user has created an EBS volume of 10 GB and attached it to a running instance. The user is trying to access EBS for the first time. Which of the below mentioned options is the correct statement with respect to a first-time EBS access?

- The volume will show a size of 8 GB

- The volume will show a loss of the IOPS performance the first time (new volumes previously needed to be wiped clean first; however, pre-warming is not needed any more)

- The volume will be blank

- If the EBS is mounted it will ask the user to create a file system

- You are running a database on an EC2 instance, with the data stored on Elastic Block Store (EBS) for persistence. At times throughout the day, you are seeing large variance in the response times of the database queries. Looking into the instance with the iostat command, you see a lot of wait time on the disk volume that the database’s data is stored on. What two ways can you improve the performance of the database’s storage while maintaining the current persistence of the data? Choose 2 answers

- Move to an SSD backed instance

- Move the database to an EBS-Optimized Instance

- Use Provisioned IOPS EBS

- Use the ephemeral storage on an m2.4xLarge Instance Instead

- You have launched an EC2 instance with four (4) 500 GB EBS Provisioned IOPS volumes attached. The EC2 instance is EBS-Optimized and supports 500 Mbps throughput between EC2 and EBS. The four EBS volumes are configured as a single RAID 0 device, and each Provisioned IOPS volume is provisioned with 4,000 IOPS (4,000 16KB reads or writes) for a total of 16,000 random IOPS on the instance. The EC2 instance initially delivers the expected 16,000 IOPS random read and write performance. Sometime later, in order to increase the total random I/O performance of the instance, you add an additional two 500 GB EBS Provisioned IOPS volumes to the RAID. Each volume is provisioned to 4,000 IOPS like the original four, for a total of 24,000 IOPS on the EC2 instance. Monitoring shows that the EC2 instance CPU utilization increased from 50% to 70%, but the total random IOPS measured at the instance level does not increase at all. What is the problem and a valid solution?

- Larger storage volumes support higher Provisioned IOPS rates: increase the provisioned volume storage of each of the 6 EBS volumes to 1TB.

- EBS-Optimized throughput limits the total IOPS that can be utilized; use an EBS-Optimized instance that provides larger throughput. (EC2 instance types have a limit on max throughput and would require larger instance types to provide 24,000 IOPS)

- Small block sizes cause performance degradation, limiting the I/O throughput; configure the instance device driver and file system to use 64KB blocks to increase throughput.

- RAID 0 only scales linearly to about 4 devices; use RAID 0 with 4 EBS Provisioned IOPS volumes but increase each Provisioned IOPS EBS volume to 6,000 IOPS.

- The standard EBS instance root volume limits the total IOPS rate; change the instance root volume to also be a 500GB 4,000 Provisioned IOPS volume
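
The reasoning behind the marked answer can be sketched as a simple minimum: the instance delivers whichever is smaller, the combined IOPS of the striped volumes or the ceiling imposed by its EBS-Optimized bandwidth. The function name and the 16,000 IOPS cap are assumptions chosen to match the plateau described in the question; they are not published limits for any instance type.

```python
def effective_iops(volume_iops: list[int], instance_iops_cap: int) -> int:
    """IOPS seen at the instance: the stripe total, limited by the instance cap."""
    return min(sum(volume_iops), instance_iops_cap)


ASSUMED_INSTANCE_CAP = 16_000  # hypothetical ceiling, inferred from the scenario

four_volumes = [4000] * 4
six_volumes = [4000] * 6

print(effective_iops(four_volumes, ASSUMED_INSTANCE_CAP))  # 16000
print(effective_iops(six_volumes, ASSUMED_INSTANCE_CAP))   # 16000
```

Adding volumes raises the stripe total to 24,000, but the result stays at the cap until the instance itself is changed to one with more EBS throughput.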

- A user has deployed an application on an EBS-backed EC2 instance. For better application performance, it requires dedicated EC2 to EBS traffic. How can the user achieve this?

- Launch the EC2 instance as EBS provisioned with PIOPS EBS

- Launch the EC2 instance as EBS enhanced with PIOPS EBS

- Launch the EC2 instance as EBS dedicated with PIOPS EBS

- Launch the EC2 instance as EBS optimized with PIOPS EBS