Google Cloud Dataflow vs Dataproc

Cloud Dataproc

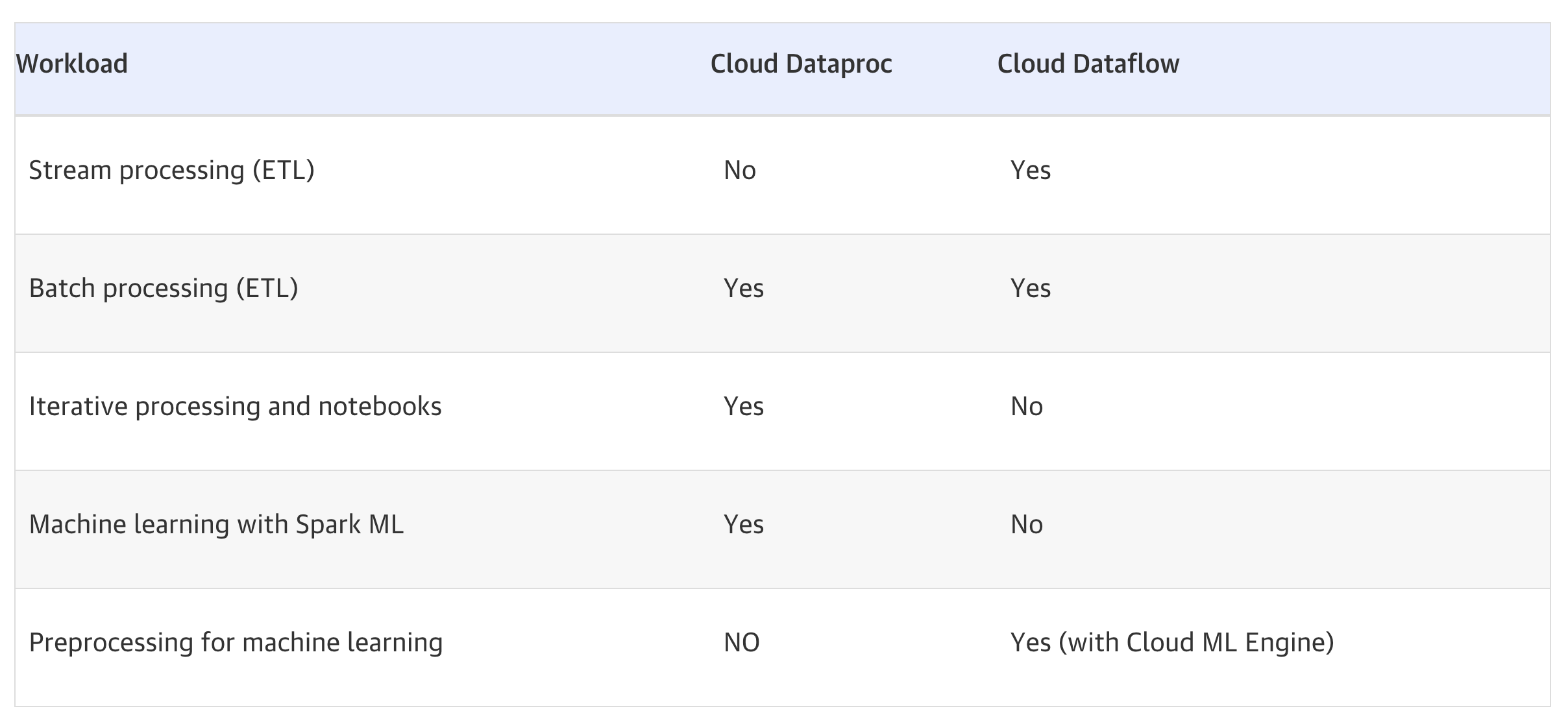

- Cloud Dataproc is a managed Spark and Hadoop service that lets you take advantage of open-source data tools for batch processing, querying, streaming, and machine learning.

- Cloud Dataproc provides a Hadoop cluster on GCP and access to Hadoop-ecosystem tools (e.g. Apache Pig, Hive, and Spark); this has strong appeal if you are already familiar with Hadoop tools and have existing Hadoop jobs

- Ideal for lift-and-shift migration of an existing Hadoop environment

- Requires manual provisioning of clusters

- Consider Dataproc

  - If you have a substantial investment in Apache Spark or Hadoop on-premises and are considering moving to the cloud

  - If you are looking at a hybrid cloud and need portability across a private/multi-cloud environment

  - If Spark is the primary machine learning tool and platform in the current environment

  - If the code depends on custom packages and also needs distributed computing

Cloud Dataflow

- Google Cloud Dataflow is a fully managed, serverless service for unified stream and batch data processing

- Consider Dataflow

  - When using it as a pre-processing pipeline for an ML model that can be deployed on GCP AI Platform Training (formerly Cloud ML Engine)

  - When none of the considerations listed above for Cloud Dataproc are relevant

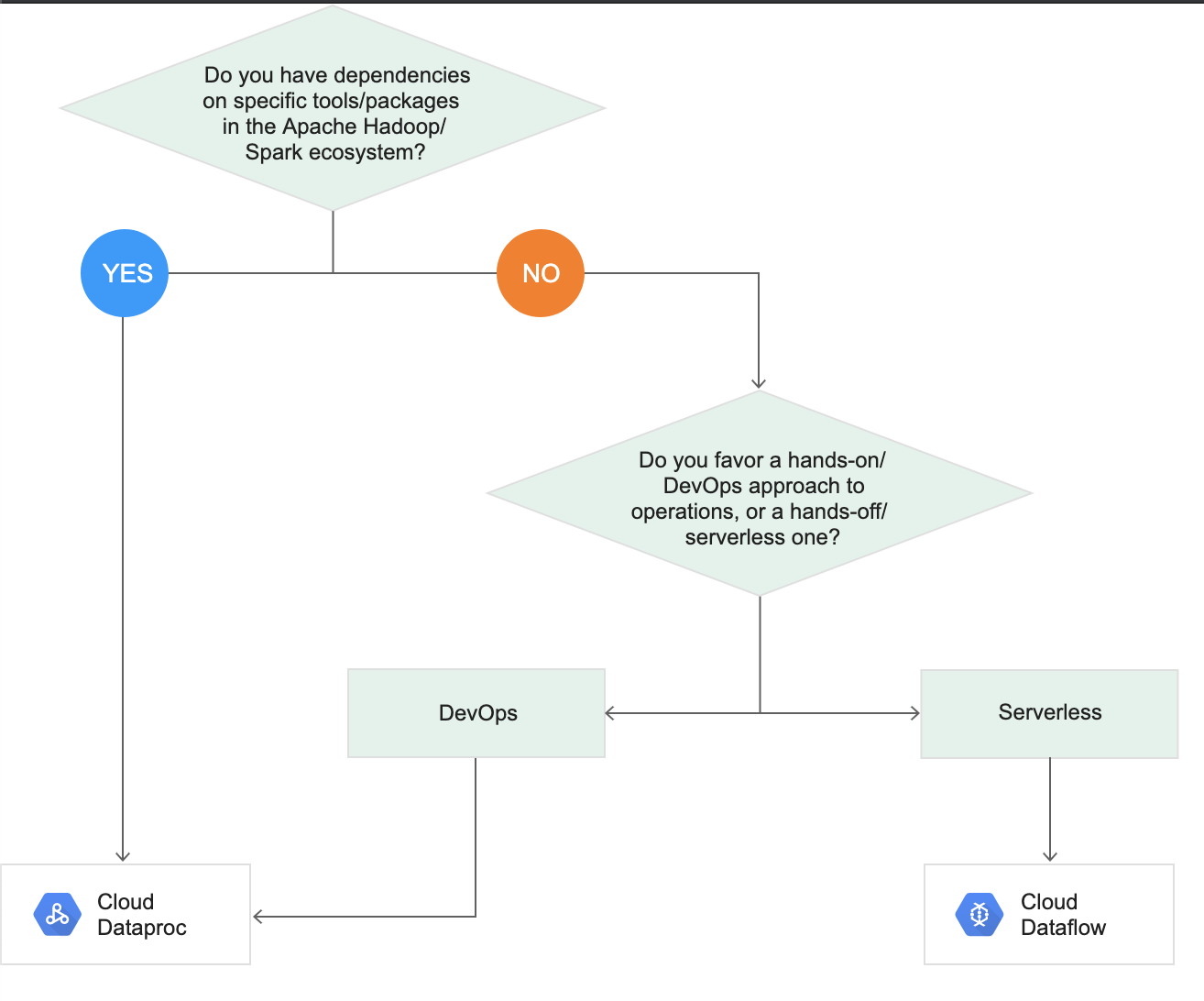

Cloud Dataflow vs Dataproc Decision Tree
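
The considerations listed above can be sketched as a small decision function. This is only an illustrative reading of the bullet points, not an official Google decision procedure; the `Workload` class, its field names, and `recommend_service` are hypothetical names made up for this example.

```python
from dataclasses import dataclass


@dataclass
class Workload:
    """Hypothetical description of a workload; field names are illustrative."""
    existing_spark_or_hadoop_investment: bool = False
    needs_hybrid_or_multicloud_portability: bool = False
    spark_is_primary_ml_platform: bool = False
    depends_on_custom_packages: bool = False


def recommend_service(w: Workload) -> str:
    """Return "Dataproc" if any Dataproc consideration applies, else "Dataflow"."""
    dataproc_reasons = (
        w.existing_spark_or_hadoop_investment,
        w.needs_hybrid_or_multicloud_portability,
        w.spark_is_primary_ml_platform,
        w.depends_on_custom_packages,
    )
    return "Dataproc" if any(dataproc_reasons) else "Dataflow"


# Existing on-premises Spark and Hadoop jobs that should move with little code change.
print(recommend_service(Workload(existing_spark_or_hadoop_investment=True)))  # Dataproc
# New pipeline with no ties to the Hadoop ecosystem.
print(recommend_service(Workload()))  # Dataflow
```

A real decision would weigh more factors (cost, team skills, latency requirements), so treat the result as a starting point rather than a verdict.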

GCP Certification Exam Practice Questions

- Questions are collected from the Internet, and the answers are marked as per my knowledge and understanding (which might differ from yours).

- GCP services are updated every day, and both the questions and the answers might soon be outdated, so research accordingly.

- GCP exam questions are not updated to keep pace with GCP updates, so even if the underlying feature has changed, the question might not be updated.

- Open to further feedback, discussion, and correction.

- Your company is forecasting a sharp increase in the number and size of Apache Spark and Hadoop jobs being run on your local data center. You want to utilize the cloud to help you scale this upcoming demand with the least amount of operations work and code change. Which product should you use?

  - Google Cloud Dataflow

  - Google Cloud Dataproc

  - Google Compute Engine

  - Google Container Engine

- A startup plans to use a data processing platform that supports both batch and streaming applications. They would prefer to have a hands-off/serverless data processing platform to start with. Which GCP service is suited for them?

  - Dataproc

  - Dataprep

  - Dataflow

  - BigQuery
