Google Cloud Spanner

- Cloud Spanner is a fully managed, mission-critical relational database service.

- Cloud Spanner provides a scalable online transaction processing (OLTP) database with high availability and strong consistency at a global scale.

- Cloud Spanner provides traditional relational semantics such as schemas, ACID transactions, and a SQL interface.

- Cloud Spanner provides automatic, synchronous replication within and across regions for high availability (up to 99.999%).

- Cloud Spanner benefits

- OLTP (Online Transactional Processing)

- Global scale

- Relational data model

- ACID transactions with strong (external) consistency

- Low latency

- Fully managed and highly available

- Automatic replication

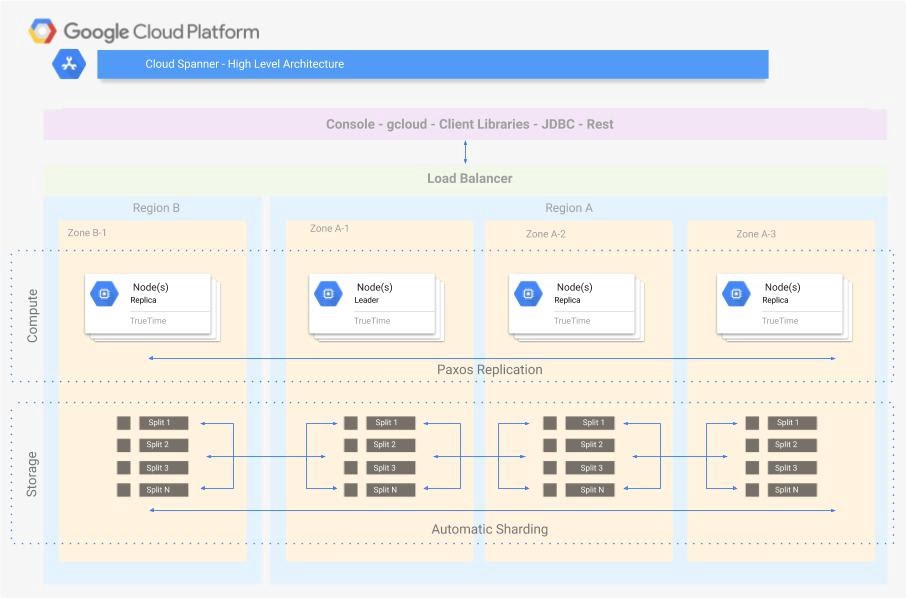

Cloud Spanner Architecture

Instance

- Cloud Spanner Instance determines the location and the allocation of resources

- Instance creation includes two important choices

- Instance configuration

- determines the geographic placement i.e. location and replication of the databases

- Location can be regional or multi-regional

- cannot be changed after the instance is created

- Node count

- determines the amount of the instance’s serving and storage resources

- can be updated

- Instance configuration

- Cloud Spanner distributes an instance across zones of one or more regions to provide high performance and high availability

- Cloud Spanner instances have:

- At least three read-write replicas of the database each in a different zone

- Each zone is a separate isolation fault domain

- Paxos distributed consensus protocol used for writes/transaction commits

- Synchronous replication of writes to all zones across all regions

- Database is available even if one zone fails (99.999% availability SLA for multi-region and 99.99% availability SLA for regional)
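To make the two SLA figures above concrete, the following minimal sketch converts an availability percentage into a downtime budget. Treating the percentage as a yearly budget is a simplifying assumption made here for illustration, and the function name is hypothetical.

```python
MINUTES_PER_YEAR = 365 * 24 * 60  # 525,600 minutes, ignoring leap years


def downtime_budget_minutes(availability_percent: float) -> float:
    """Return the yearly downtime implied by an availability percentage."""
    unavailable_fraction = 1 - availability_percent / 100
    return MINUTES_PER_YEAR * unavailable_fraction


multi_region = downtime_budget_minutes(99.999)
regional = downtime_budget_minutes(99.99)

print(f"multi-region: {multi_region:.2f} min/year")
print(f"regional:     {regional:.2f} min/year")
```

Under this assumption, the multi-region figure works out to roughly 5.3 minutes per year and the regional figure to roughly 52.6 minutes per year, a tenfold difference.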

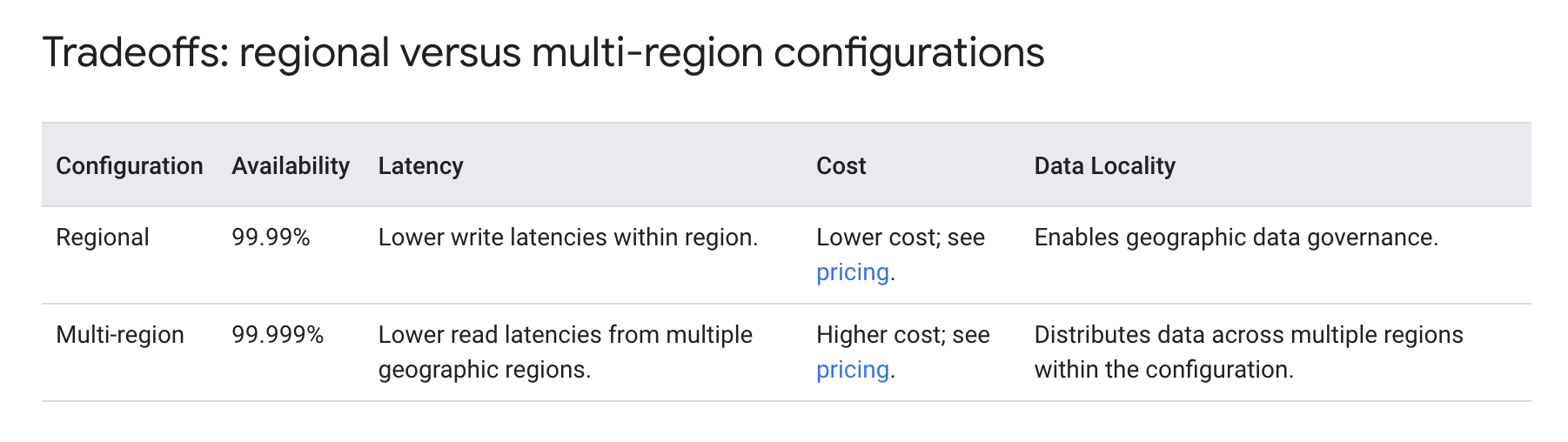

Regional vs Multi-Regional

- Regional Configuration

- Cloud Spanner maintains 3 read-write replicas, each within a different Google Cloud zone in that region.

- Each read-write replica contains a full copy of the operational database that is able to serve read-write and read-only requests.

- Cloud Spanner uses replicas in different zones so that if a single-zone failure occurs, the database remains available.

- Every Cloud Spanner mutation requires a write quorum that’s composed of a majority of voting replicas. Write quorums are formed from two out of the three replicas in regional configurations.

- Provides 99.99% availability

- Multi-Regional Configuration

- Multi-region configurations allow replicating the database’s data not just in multiple zones, but in multiple zones across multiple regions

- Additional replicas enable reading data with low latency from multiple locations close to or within the regions in the configuration.

- As the quorum (read-write) replicas are spread across more than one region, additional network latency is incurred when these replicas communicate with each other to vote on writes.

- Multi-region configurations enable the application to achieve faster reads in more places at the cost of a small increase in write latency.

- Provides 99.999% availability

- Multi-region configurations use Paxos-based replication, TrueTime, and leader election to provide global consistency and higher availability.
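The majority rule for write quorums described above can be sketched in a few lines. This is an illustrative model only, not Spanner's implementation. The three-replica case matches the regional configuration; the five-replica case is an assumed voting-replica count for a multi-region configuration.

```python
def write_quorum(voting_replicas: int) -> int:
    """Smallest number of voting replicas that forms a majority."""
    return voting_replicas // 2 + 1


def can_commit(voting_replicas: int, reachable_replicas: int) -> bool:
    """A write can commit only if a majority of voting replicas is reachable."""
    return reachable_replicas >= write_quorum(voting_replicas)


# Regional configuration: 3 voting replicas, quorum of 2.
print(write_quorum(3))   # 2
print(can_commit(3, 2))  # True: one zone can fail
print(can_commit(3, 1))  # False: two zones down, no majority

# Assumed multi-region example with 5 voting replicas.
print(write_quorum(5))   # 3
```

Because a majority must acknowledge every write, spreading voting replicas across regions adds the inter-region round trip to the commit path, which is the write-latency cost mentioned above.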

Replication

- Cloud Spanner automatically gets replication at the byte level from the underlying distributed filesystem.

- Cloud Spanner also performs data replication to provide global availability and geographic locality, with fail-over between replicas being transparent to the client.

- Cloud Spanner creates multiple copies, or “replicas,” of the rows, then stores these replicas in different geographic areas.

- Cloud Spanner uses a synchronous, Paxos distributed consensus protocol, in which voting replicas take a vote on every write request to ensure transactions are available in sufficient replicas before being committed.

- Globally synchronous replication gives the ability to read the most up-to-date data from any Cloud Spanner read-write or read-only replica.

- Cloud Spanner creates replicas of each database split

- A split holds a range of contiguous rows, where the rows are ordered by the primary key.

- All of the data in a split is physically stored together in the replica, and Cloud Spanner serves each replica out of an independent failure zone.

- A set of splits is stored and replicated using Paxos.

- Within each Paxos replica set, one replica is elected to act as the leader.

- Leader replicas are responsible for handling writes, while any read-write or read-only replica can serve a read request without communicating with the leader (though if a strong read is requested, the leader will typically be consulted to ensure that the read-only replica has received all recent mutations)

- Cloud Spanner automatically reshards data into splits and automatically migrates data across machines (even across datacenters) to balance load, and in response to failures.

- Spanner’s sharding considers the parent child relationships in interleaved tables and related data is migrated together to preserve query performance
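The idea of a split as a contiguous, key-ordered range of rows can be illustrated with a small lookup. This is a simplified model, not how Spanner routes requests internally. The split boundaries and the function name are hypothetical.

```python
from bisect import bisect_right

# Hypothetical boundaries: each value is the first primary key held by a split.
SPLIT_START_KEYS = [0, 1000, 5000, 20000]


def split_for_key(key: int) -> int:
    """Return the index of the split whose key range contains `key`."""
    if key < SPLIT_START_KEYS[0]:
        raise ValueError(f"key {key} is below the first split boundary")
    # bisect_right counts the boundaries <= key; subtract 1 for a 0-based index.
    return bisect_right(SPLIT_START_KEYS, key) - 1


print(split_for_key(999))    # 0
print(split_for_key(1000))   # 1
print(split_for_key(25000))  # 3

# Monotonically increasing keys all land in the last split.
recent_keys = [20001, 20002, 20003]
print([split_for_key(k) for k in recent_keys])  # [3, 3, 3]
```

The last line shows why sequential primary keys concentrate writes on a single split, and therefore on a single leader, until the data is resharded.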

Cloud Spanner Data Model

- A Cloud Spanner Instance can contain one or more databases

- A Cloud Spanner database can contain one or more tables

- Tables look like relational database tables in that they are structured with rows, columns, and values, and they contain primary keys

- Every table must have a primary key, and that primary key can be composed of zero or more columns of that table

- Parent-child relationships in Cloud Spanner

- Table Interleaving

- Table interleaving is a good choice for many parent-child relationships where the child table’s primary key includes the parent table’s primary key columns

- Child rows are colocated with the parent rows significantly improving the performance

- Primary key column(s) of the parent table must be the prefix of the primary key of the child table

- Foreign Keys

- Foreign keys are similar to traditional databases.

- They are not limited to primary key columns, and tables can have multiple foreign key relationships, both as a parent in some relationships and a child in others.

- The foreign key relationship does not guarantee data co-location

- Indexes

- Cloud Spanner automatically creates an index for each table’s primary key

- Secondary indexes can be created for other columns
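The interleaving rule above (the parent's primary key columns must be a prefix of the child's primary key) can be sketched as follows. The `Singers` and `Albums` tables are illustrative names, not taken from this article, and the DDL strings are only held as text here. The helper function is hypothetical.

```python
# Illustrative Spanner DDL for an interleaved parent/child pair.
PARENT_DDL = """
CREATE TABLE Singers (
  SingerId  INT64 NOT NULL,
  FirstName STRING(1024),
) PRIMARY KEY (SingerId)
"""

CHILD_DDL = """
CREATE TABLE Albums (
  SingerId   INT64 NOT NULL,
  AlbumId    INT64 NOT NULL,
  AlbumTitle STRING(MAX),
) PRIMARY KEY (SingerId, AlbumId),
  INTERLEAVE IN PARENT Singers ON DELETE CASCADE
"""


def is_valid_interleave(parent_pk: tuple, child_pk: tuple) -> bool:
    """Check that the parent's key columns are a prefix of the child's key."""
    return child_pk[: len(parent_pk)] == parent_pk


print(is_valid_interleave(("SingerId",), ("SingerId", "AlbumId")))  # True
print(is_valid_interleave(("SingerId",), ("AlbumId", "SingerId")))  # False

# Sorting by key places each child row directly after its parent row.
rows = [(2,), (1, 2), (1,), (2, 1), (1, 1)]
print(sorted(rows))  # [(1,), (1, 1), (1, 2), (2,), (2, 1)]
```

The sorted output mimics the colocation effect: because rows are ordered by primary key, child rows that share the parent's key prefix end up next to the parent row.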

Cloud Spanner Scaling

- Increase the compute capacity of the instance to scale up the server and storage resources in the instance.

- Each node allows for an additional 2TB of data storage

- Nodes provide additional compute resources to increase throughput

- Increasing compute capacity does not increase the replica count but gives each replica more CPU and RAM, which increases the replica’s throughput (that is, more reads and writes per second can occur).
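As a rough sizing aid, the per-node storage figure above can be turned into a minimum node count. This sketch uses the 2 TB per node quoted in this article as its only input. That limit is an assumption that may not match current product limits, and CPU load may require more nodes than storage alone.

```python
import math

STORAGE_PER_NODE_TB = 2  # figure quoted in the text above; verify current limits


def min_nodes_for_storage(data_tb: float) -> int:
    """Smallest node count whose combined storage limit covers `data_tb`."""
    return max(1, math.ceil(data_tb / STORAGE_PER_NODE_TB))


print(min_nodes_for_storage(0.5))  # 1
print(min_nodes_for_storage(4))    # 2
print(min_nodes_for_storage(5))    # 3
```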

Cloud Spanner Backup & PITR

- Cloud Spanner Backup and Restore helps create backups of Cloud Spanner databases on demand, and restore them to provide protection against operator and application errors that result in logical data corruption.

- Backups are highly available, encrypted, and can be retained for up to a year from the time they are created.

- Cloud Spanner point-in-time recovery (PITR) provides protection against accidental deletion or writes.

- PITR works by letting you configure a database’s `version_retention_period` to retain all versions of data and schema, from a minimum of 1 hour up to a maximum of 7 days.
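The retention bounds above can be checked before a change is submitted. The sketch below validates a requested period against the 1 hour to 7 day range and builds an `ALTER DATABASE ... SET OPTIONS` statement as a string. The helper name and database ID are hypothetical, and the exact DDL form should be confirmed against the Spanner DDL reference before use.

```python
from datetime import timedelta

MIN_RETENTION = timedelta(hours=1)
MAX_RETENTION = timedelta(days=7)


def retention_ddl(database_id: str, retention: timedelta) -> str:
    """Build a DDL statement that sets version_retention_period, in seconds."""
    if not MIN_RETENTION <= retention <= MAX_RETENTION:
        raise ValueError("retention must be between 1 hour and 7 days")
    seconds = int(retention.total_seconds())
    return (
        f"ALTER DATABASE `{database_id}` "
        f"SET OPTIONS (version_retention_period = '{seconds}s')"
    )


ddl = retention_ddl("example-db", timedelta(days=2))
print(ddl)

try:
    retention_ddl("example-db", timedelta(minutes=30))
except ValueError as err:
    print(f"rejected: {err}")
```

Expressing the period in seconds avoids rounding when the requested duration is not a whole number of hours or days.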

Cloud Spanner Best Practices

- Design a schema that prevents hotspots and other performance issues.

- For optimal write latency, place compute resources for write-heavy workloads within or close to the default leader region.

- For optimal read performance outside of the default leader region, use staleness of at least 15 seconds.

- To avoid single-region dependency for the workloads, place critical compute resources in at least two regions.

- Provision enough compute capacity to keep high-priority total CPU utilization under:

- 65% in each region for regional configuration

- 45% in each region for multi-regional configuration
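The CPU guidance above can be expressed as a simple check. The threshold values come from this list. The function name and configuration labels are hypothetical, and the sketch does not query any monitoring API.

```python
# Recommended ceilings for high-priority total CPU utilization, per the list above.
CPU_THRESHOLDS = {"regional": 65.0, "multi-regional": 45.0}


def needs_more_capacity(config_type: str, high_priority_cpu_percent: float) -> bool:
    """Return True when utilization is at or above the recommended ceiling."""
    return high_priority_cpu_percent >= CPU_THRESHOLDS[config_type]


print(needs_more_capacity("regional", 60.0))        # False
print(needs_more_capacity("multi-regional", 60.0))  # True
```

The same 60% reading is acceptable for a regional instance but calls for more compute capacity in a multi-regional one, since the multi-regional ceiling is lower.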

GCP Certification Exam Practice Questions

- Questions are collected from the Internet, and the answers are marked as per my knowledge and understanding (which might differ from yours).

- GCP services are updated every day, and both the answers and questions might be outdated soon, so research accordingly.

- GCP exam questions are not updated to keep pace with GCP updates, so even if the underlying feature has changed, the question might not be updated.

- Open to further feedback, discussion and correction.

- Your customer has implemented a solution that uses Cloud Spanner and notices some read latency-related performance issues on one table. This table is accessed only by their users using a primary key. The table schema is shown below. You want to resolve the issue. What should you do?

- Remove the profile_picture field from the table.

- Add a secondary index on the person_id column.

- Change the primary key to not have monotonically increasing values.

- Create a secondary index using the following Data Definition Language (DDL): `CREATE INDEX person_id_ix ON Persons (person_id, firstname, lastname) STORING (profile_picture)`

- You are building an application that stores relational data from users. Users across the globe will use this application. Your CTO is concerned about the scaling requirements because the size of the user base is unknown. You need to implement a database solution that can scale with your user growth with minimum configuration changes. Which storage solution should you use?

- Cloud SQL

- Cloud Spanner

- Cloud Firestore

- Cloud Datastore

- A financial organization wishes to develop a global application to store transactions happening from different part of the world. The storage system must provide low latency transaction support and horizontal scaling. Which GCP service is appropriate for this use case?

- Bigtable

- Datastore

- Cloud Storage

- Cloud Spanner