AWS Data Pipeline

- AWS Data Pipeline is a web service that makes it easy to automate and schedule regular data movement and data processing activities in AWS

- helps define data-driven workflows

- integrates with on-premises and cloud-based storage systems

- helps quickly define a pipeline, which defines a dependent chain of data sources, destinations, and predefined or custom data processing activities

- supports scheduling where the pipeline regularly performs processing activities such as distributed data copy, SQL transforms, EMR applications, or custom scripts against destinations such as S3, RDS, or DynamoDB.

- ensures that the pipelines are robust and highly available by executing the scheduling, retry, and failure logic for the workflows as a highly scalable and fully managed service.

AWS Data Pipeline features

- Distributed, fault-tolerant, and highly available

- Managed workflow orchestration service for data-driven workflows

- Infrastructure management service: provisions and terminates resources as required

- Provides dependency resolution

- Can be scheduled

- Supports Preconditions for readiness checks.

- Grants control over retries, including frequency and number

- Native integration with S3, DynamoDB, RDS, EMR, EC2 and Redshift

- Support for both AWS-based and external on-premises resources

AWS Data Pipeline Concepts

Pipeline Definition

- A pipeline definition is how your business logic is communicated to AWS Data Pipeline

- A pipeline definition specifies the location of data (Data Nodes), the activities to be performed, the schedule, the resources to run the activities, preconditions, and the actions to be performed
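The sketch below shows a minimal pipeline definition written as a Python dict. It follows the JSON layout of pipeline definition files: a `Default` object, a schedule, two S3 data nodes, a `CopyActivity` and an `Ec2Resource`. It also includes a helper that flattens the definition into the `id`/`name`/`fields` shape used by the `pipelineObjects` parameter of boto3's `put_pipeline_definition`. All ids, S3 paths and the instance type are placeholder assumptions. `to_pipeline_objects` and `unresolved_refs` are hypothetical helpers, not part of any AWS SDK.

```python
from typing import Any, Dict, List

# Minimal pipeline definition in the JSON layout of a definition file.
# All ids, S3 paths and the instance type are placeholder assumptions.
DEFINITION: Dict[str, Any] = {
    "objects": [
        {   # Fields on "Default" are inherited by every other object.
            "id": "Default",
            "name": "Default",
            "scheduleType": "cron",
            "failureAndRerunMode": "CASCADE",
            "role": "DataPipelineDefaultRole",
            "resourceRole": "DataPipelineDefaultResourceRole",
            "schedule": {"ref": "DailySchedule"},
        },
        {   # Schedule: run once a day, starting when the pipeline is activated.
            "id": "DailySchedule",
            "name": "DailySchedule",
            "type": "Schedule",
            "period": "1 day",
            "startAt": "FIRST_ACTIVATION_DATE_TIME",
        },
        {   # Data node: where the input lives.
            "id": "InputData",
            "name": "InputData",
            "type": "S3DataNode",
            "directoryPath": "s3://example-bucket/input/",
        },
        {   # Data node: where the output goes.
            "id": "OutputData",
            "name": "OutputData",
            "type": "S3DataNode",
            "directoryPath": "s3://example-bucket/output/",
        },
        {   # Activity: the work to perform, wired to its data nodes and resource.
            "id": "CopyData",
            "name": "CopyData",
            "type": "CopyActivity",
            "input": {"ref": "InputData"},
            "output": {"ref": "OutputData"},
            "runsOn": {"ref": "Worker"},
        },
        {   # Resource: an EC2 instance launched only while it is needed.
            "id": "Worker",
            "name": "Worker",
            "type": "Ec2Resource",
            "instanceType": "m5.large",
            "terminateAfter": "1 Hour",
        },
    ]
}


def to_pipeline_objects(definition: Dict[str, Any]) -> List[Dict[str, Any]]:
    """Flatten definition-file objects into the id/name/fields shape that the
    pipelineObjects parameter of put_pipeline_definition uses."""
    result = []
    for obj in definition["objects"]:
        fields = []
        for key, value in obj.items():
            if key in ("id", "name"):
                continue  # id and name are top-level keys, not fields
            # A list means the same key repeated with several values.
            for item in value if isinstance(value, list) else [value]:
                if isinstance(item, dict) and "ref" in item:
                    fields.append({"key": key, "refValue": item["ref"]})
                else:
                    fields.append({"key": key, "stringValue": str(item)})
        result.append({"id": obj["id"], "name": obj.get("name", obj["id"]), "fields": fields})
    return result


def unresolved_refs(pipeline_objects: List[Dict[str, Any]]) -> List[str]:
    """Return referenced ids that no object in the definition declares."""
    ids = {o["id"] for o in pipeline_objects}
    return sorted(
        f["refValue"]
        for o in pipeline_objects
        for f in o["fields"]
        if "refValue" in f and f["refValue"] not in ids
    )
```

With credentials configured, the flattened list could be passed as `boto3.client("datapipeline").put_pipeline_definition(pipelineId=..., pipelineObjects=to_pipeline_objects(DEFINITION))`. The pipeline would first be created with `create_pipeline` and then started with `activate_pipeline`.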

Pipeline Components, Instances, and Attempts

- Pipeline components represent the business logic of the pipeline and are represented by the different sections of a pipeline definition.

- Pipeline components specify the data sources, activities, schedule, and preconditions of the workflow

- When AWS Data Pipeline runs a pipeline, it compiles the pipeline components into a set of actionable instances; each instance contains all the information needed to perform a specific task

- Data Pipeline provides durable and robust data management by retrying a failed operation according to the configured retry frequency and maximum number of retries; each try is tracked as an attempt, which records its result and, if applicable, the failure reason
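The relationship between an instance and its attempts can be modelled with a small local simulation. This is a conceptual sketch, not how the service is implemented. `run_instance`, `Instance` and `Attempt` are hypothetical names. The `maximum_retries` and `retry_delay_seconds` parameters only loosely mirror the `maximumRetries` and `retryDelay` fields that activities accept.

```python
import time
from dataclasses import dataclass, field
from typing import Callable, List, Optional


@dataclass
class Attempt:
    number: int
    status: str                  # "FINISHED" or "FAILED" in this model
    error: Optional[str] = None  # failure reason, if any


@dataclass
class Instance:
    component_id: str            # the pipeline component this instance came from
    attempts: List[Attempt] = field(default_factory=list)


def run_instance(component_id: str, task: Callable[[], None],
                 maximum_retries: int = 2, retry_delay_seconds: float = 0.0,
                 sleep: Callable[[float], None] = time.sleep) -> Instance:
    """Run `task` once, then retry on failure up to `maximum_retries` times,
    recording every try as an Attempt."""
    instance = Instance(component_id)
    for number in range(1, maximum_retries + 2):  # first try + retries
        try:
            task()
        except Exception as exc:
            instance.attempts.append(Attempt(number, "FAILED", str(exc)))
            if number <= maximum_retries:
                sleep(retry_delay_seconds)  # wait before the next attempt
            continue
        instance.attempts.append(Attempt(number, "FINISHED"))
        break
    return instance


# Example: a task whose input is missing on the first two tries.
_calls = {"n": 0}


def flaky_copy() -> None:
    _calls["n"] += 1
    if _calls["n"] < 3:
        raise RuntimeError("input not ready")


demo = run_instance("CopyData", flaky_copy, maximum_retries=2)
print([(a.number, a.status) for a in demo.attempts])
# [(1, 'FAILED'), (2, 'FAILED'), (3, 'FINISHED')]
```

In this model, one instance yields several attempt records. If the retries run out before a success, the last attempt stays `FAILED`.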

Task Runners

- A task runner is an application that polls AWS Data Pipeline for tasks and then performs those tasks

- When Task Runner is installed and configured, it polls AWS Data Pipeline for tasks associated with activated pipelines; after a task is assigned to Task Runner, it performs that task and reports its status back to AWS Data Pipeline.

- A task is a discrete unit of work that the AWS Data Pipeline service shares with a task runner. It differs from a pipeline, which defines activities and resources that usually yield several tasks

- Tasks can be executed either on the AWS Data Pipeline managed or user-managed resources.
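A self-managed worker's poll, perform and report cycle can be sketched with the `poll_for_task` and `set_task_status` operations of the boto3 `datapipeline` client. `work_once`, the `perform` callback and the worker-group name are hypothetical. The official Task Runner is a Java application that also handles logging, progress reporting and data staging, so treat this only as an illustration of the protocol.

```python
import socket
from typing import Any, Callable, Dict


def work_once(client: Any, worker_group: str,
              perform: Callable[[Dict[str, Any]], None]) -> bool:
    """One poll/perform/report cycle of a minimal, hypothetical worker.

    `client` is assumed to be boto3.client("datapipeline"), or any object exposing
    poll_for_task and set_task_status with the same keyword arguments.
    Returns True if a task was handed out, False if the poll came back empty.
    """
    response = client.poll_for_task(workerGroup=worker_group,
                                    hostname=socket.gethostname())
    task = response.get("taskObject")
    if not task:
        return False  # the long poll ended without any work for this group

    try:
        # task["objects"] carries the pipeline objects for this attempt;
        # interpreting them is left to the `perform` callback.
        perform(task)
    except Exception as exc:
        client.set_task_status(taskId=task["taskId"], taskStatus="FAILED",
                               errorId="WorkerError", errorMessage=str(exc))
    else:
        client.set_task_status(taskId=task["taskId"], taskStatus="FINISHED")
    return True
```

A real worker would call this in a loop, for example `while True: work_once(boto3.client("datapipeline"), "onprem-group", run_task)`. Here `"onprem-group"` must match the `workerGroup` set on the activities, and `run_task` is your own handler. When no task is queued, `poll_for_task` long-polls for up to 90 seconds, so the client's read timeout should allow for that (for example via `botocore.config.Config(read_timeout=...)`).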

Data Nodes

- Data Node defines the location and type of data that a pipeline activity uses as source (input) or destination (output)

- supports S3, Redshift, DynamoDB, and SQL data nodes
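The four data node types could be declared as follows. Each object names its type and the field that holds the data's location. Paths, tables and ids are placeholders. The `RedshiftDb` and `RdsDb` references assume database objects declared elsewhere in the same definition. `location_of` is a hypothetical helper that reads back the location field for each type.

```python
from typing import Any, Dict, List

# Placeholder data nodes; "RedshiftDb" and "RdsDb" are assumed to be database
# objects declared elsewhere in the same definition.
DATA_NODES: List[Dict[str, Any]] = [
    {"id": "RawLogs", "type": "S3DataNode",
     # Expression syntax resolves to one S3 folder per scheduled day.
     "directoryPath": "s3://example-bucket/logs/#{format(@scheduledStartTime, 'YYYY-MM-dd')}/"},
    {"id": "Orders", "type": "DynamoDBDataNode", "tableName": "Orders",
     "readThroughputPercent": "0.25"},  # target share of provisioned read capacity
    {"id": "Warehouse", "type": "RedshiftDataNode", "tableName": "orders",
     "database": {"ref": "RedshiftDb"}},
    {"id": "Customers", "type": "SqlDataNode", "table": "customers",
     "database": {"ref": "RdsDb"},
     "selectQuery": "select * from #{table}"},
]

# Which field holds the data's location for each type (hypothetical lookup table).
LOCATION_FIELDS = {
    "S3DataNode": ("directoryPath", "filePath"),
    "DynamoDBDataNode": ("tableName",),
    "RedshiftDataNode": ("tableName",),
    "SqlDataNode": ("table",),
}


def location_of(node: Dict[str, Any]) -> str:
    """Return where a data node's data lives, based on its type."""
    for field_name in LOCATION_FIELDS[node["type"]]:
        if field_name in node:
            return node[field_name]
    raise ValueError(f"{node['id']} declares no location field")
```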

Databases

- supports JDBC, RDS, and Redshift databases
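Database objects hold connection details that SQL and Redshift data nodes, and activities such as `SqlActivity`, reference through their `database` field. The sketch declares one object of each supported type. Instance ids, hosts, driver locations and credentials are placeholder assumptions. Fields prefixed with `*`, such as `*password`, are treated as sensitive values. `redacted` is a hypothetical helper for printing an object without them.

```python
from typing import Any, Dict, List

# Placeholder database objects; instance ids, hosts, driver paths and
# credentials are assumptions. "*"-prefixed fields are treated as sensitive.
DATABASES: List[Dict[str, Any]] = [
    {"id": "RdsDb", "type": "RdsDatabase", "rdsInstanceId": "orders-db",
     "username": "pipeline_user", "*password": "CHANGE_ME"},
    {"id": "JdbcDb", "type": "JdbcDatabase",
     "connectionString": "jdbc:postgresql://db.example.internal:5432/sales",
     "jdbcDriverClass": "org.postgresql.Driver",
     "jdbcDriverJarUri": "s3://example-bucket/drivers/postgresql.jar",
     "username": "pipeline_user", "*password": "CHANGE_ME"},
    {"id": "RedshiftDb", "type": "RedshiftDatabase", "clusterId": "analytics",
     "databaseName": "dw", "username": "pipeline_user", "*password": "CHANGE_ME"},
]


def redacted(obj: Dict[str, Any]) -> Dict[str, Any]:
    """Copy of an object with sensitive ('*'-prefixed) values masked, for logging."""
    return {k: ("****" if k.startswith("*") else v) for k, v in obj.items()}
```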

Activities

- An activity is a pipeline component that defines the work to perform

- Data Pipeline provides predefined activities for common scenarios like SQL transformations, data movement, Hive queries, etc.

- Activities are extensible and can run your own custom scripts, supporting virtually any combination of processing steps

Preconditions

- Precondition is a pipeline component containing conditional statements that must be satisfied (evaluated to True) before an activity can run

- A pipeline supports two kinds of preconditions:

- System-managed preconditions are run by the AWS Data Pipeline web service on your behalf and do not require a computational resource. They include source data and key checks, e.g., DynamoDB data exists, DynamoDB table exists, S3 key exists, or S3 prefix not empty

- User-managed preconditions run on user-defined and user-managed computational resources. They can be defined as an Exists check or a shell command
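Two system-managed checks and one user-managed shell check can be attached to the same activity. The type groupings follow the AWS Data Pipeline documentation. The S3 key, table, command and worker group are placeholders, and `managed_by` is a hypothetical helper. The assumption that a shell precondition passes on exit status 0 is noted in the code.

```python
from typing import Any, Dict, List

# Precondition types, grouped by who evaluates them.
SYSTEM_MANAGED = {"DynamoDBDataExists", "DynamoDBTableExists", "S3KeyExists", "S3PrefixNotEmpty"}
USER_MANAGED = {"Exists", "ShellCommandPrecondition"}

# Placeholder keys, tables and commands.
PRECONDITIONS: List[Dict[str, Any]] = [
    {"id": "InputReady", "type": "S3KeyExists",
     "s3Key": "s3://example-bucket/input/_SUCCESS"},
    {"id": "OrdersTable", "type": "DynamoDBTableExists", "tableName": "Orders"},
    # Assumed to pass when the command exits with status 0.
    {"id": "ExportDirPresent", "type": "ShellCommandPrecondition",
     "command": "test -d /data/exports"},
]

# The activity runs only after every referenced precondition evaluates to true.
EXPORT_ORDERS: Dict[str, Any] = {
    "id": "ExportOrders", "type": "ShellCommandActivity",
    "command": "echo exporting",  # placeholder work
    "precondition": [{"ref": p["id"]} for p in PRECONDITIONS],
    "workerGroup": "onprem-group",  # user-managed checks run on this resource
}


def managed_by(precondition: Dict[str, Any]) -> str:
    """Classify a precondition as 'system'- or 'user'-managed by its type."""
    if precondition["type"] in SYSTEM_MANAGED:
        return "system"
    if precondition["type"] in USER_MANAGED:
        return "user"
    raise ValueError(f"unknown precondition type: {precondition['type']}")
```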

Resources

- A resource is a computational resource that performs the work that a pipeline activity specifies

- supports AWS Data Pipeline-managed and self-managed resources

- AWS Data Pipeline-managed resources include EC2 and EMR, which are launched by the Data Pipeline service only when they’re needed

- Self-managed on-premises resources can also be used; a Task Runner package installed on them continuously polls the AWS Data Pipeline service for work to perform

- Resources can run in the same region as their working data set, or even in a region different from the one AWS Data Pipeline runs in

- Resources launched by AWS Data Pipeline count toward your account's resource limits (for example, EC2 instance limits), which should be taken into account
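An activity chooses where it runs in one of two ways. `runsOn` points at an `Ec2Resource` or `EmrCluster` that AWS Data Pipeline launches and terminates. `workerGroup` names a group polled by a self-managed Task Runner. Instance types, counts, region and group name are placeholders, and the activities are trimmed fragments with their other required fields omitted. `execution_target` is a hypothetical helper.

```python
from typing import Any, Dict, List

# Placeholder resources; instance types, counts and region are assumptions.
RESOURCES: List[Dict[str, Any]] = [
    {"id": "CopyHost", "type": "Ec2Resource", "instanceType": "m5.large",
     "region": "eu-west-1",        # may differ from the pipeline's region
     "terminateAfter": "2 Hours"},  # resource is terminated after this long
    {"id": "HiveCluster", "type": "EmrCluster",
     "masterInstanceType": "m5.xlarge", "coreInstanceType": "m5.xlarge",
     "coreInstanceCount": "2", "terminateAfter": "3 Hours"},
]

# Activity fragments (inputs, outputs and scripts omitted) showing the two ways
# to pick where work runs.
ACTIVITIES: List[Dict[str, Any]] = [
    {"id": "CopyToS3", "type": "CopyActivity", "runsOn": {"ref": "CopyHost"}},
    {"id": "Aggregate", "type": "HiveActivity", "runsOn": {"ref": "HiveCluster"}},
    {"id": "LocalExtract", "type": "ShellCommandActivity",
     "command": "./extract.sh", "workerGroup": "onprem-group"},
]


def execution_target(activity: Dict[str, Any]) -> str:
    """runsOn -> resource launched and terminated by AWS Data Pipeline;
    workerGroup -> self-managed host whose Task Runner polls that group."""
    if "runsOn" in activity:
        return "managed:" + activity["runsOn"]["ref"]
    if "workerGroup" in activity:
        return "self-managed:" + activity["workerGroup"]
    raise ValueError(f"{activity['id']} has neither runsOn nor workerGroup")
```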

Actions

- Actions are steps that a pipeline takes when a certain event, such as success or failure, occurs.

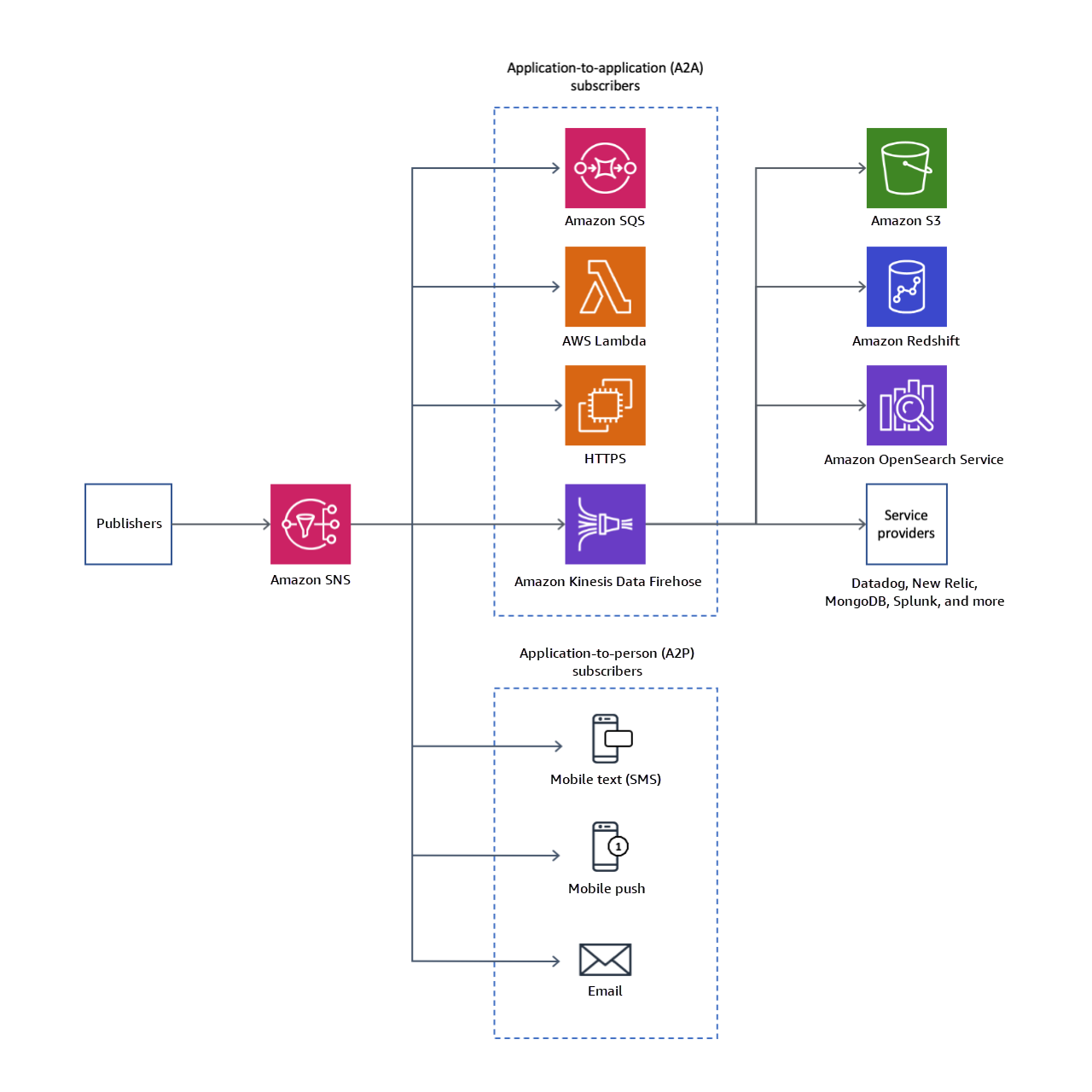

- Pipeline supports SNS notifications and termination action on resources
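SNS notification and termination actions are attached through an activity's `onSuccess`, `onFail` and `onLateAction` fields. The topic ARN, role, timeout and ids are placeholders. `actions_for` is a hypothetical helper that resolves which action types fire for a given event.

```python
from typing import Any, Dict, List

# Placeholder topic ARN and role; the #{...} expressions are evaluated by the service.
ACTIONS: Dict[str, Dict[str, Any]] = {
    "NotifyFailure": {"id": "NotifyFailure", "type": "SnsAlarm",
                      "topicArn": "arn:aws:sns:us-east-1:123456789012:pipeline-alerts",
                      "subject": "Copy failed: #{node.@scheduledStartTime}",
                      "message": "Could not copy #{node.input} to #{node.output}.",
                      "role": "DataPipelineDefaultRole"},
    "CancelIfLate": {"id": "CancelIfLate", "type": "Terminate"},
}

COPY_DATA: Dict[str, Any] = {
    "id": "CopyData", "type": "CopyActivity",   # inputs/outputs omitted
    "onFail": {"ref": "NotifyFailure"},         # send an SNS notification
    "lateAfterTimeout": "2 Hours",              # considered late after this timeout
    "onLateAction": {"ref": "CancelIfLate"},    # then cancel the late work
}

EVENT_FIELDS = {"success": "onSuccess", "failure": "onFail", "late": "onLateAction"}


def actions_for(activity: Dict[str, Any], event: str) -> List[str]:
    """Types of the actions an activity triggers for a given event."""
    value = activity.get(EVENT_FIELDS[event])
    if value is None:
        return []
    refs = value if isinstance(value, list) else [value]
    return [ACTIONS[r["ref"]]["type"] for r in refs]
```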

AWS Certification Exam Practice Questions

- Questions are collected from the Internet and the answers are marked as per my knowledge and understanding (which might differ from yours).

- AWS services are updated every day and both the answers and questions might be outdated soon, so research accordingly.

- AWS exam questions are not updated to keep pace with AWS updates, so even if the underlying feature has changed, the question might not be updated

- Open to further feedback, discussion and correction.

- An international company has deployed a multi-tier web application that relies on DynamoDB in a single region. For regulatory reasons they need disaster recovery capability in a separate region with a Recovery Time Objective of 2 hours and a Recovery Point Objective of 24 hours. They should synchronize their data on a regular basis and be able to provision the web application rapidly using CloudFormation. The objective is to minimize changes to the existing web application, control the throughput of DynamoDB used for the synchronization of data, and synchronize only the modified elements. Which design would you choose to meet these requirements?

- Use AWS Data Pipeline to schedule a DynamoDB cross-region copy once a day. Create a ‘Lastupdated’ attribute in your DynamoDB table that would represent the timestamp of the last update and use it as a filter. (Refer Blog Post)

- Use EMR and write a custom script to retrieve data from DynamoDB in the current region using a SCAN operation and push it to DynamoDB in the second region. (No scheduling or throughput control)

- Use AWS Data Pipeline to schedule an export of the DynamoDB table to S3 in the current region once a day, then schedule another task immediately after it that will import data from S3 to DynamoDB in the other region. (With AWS Data Pipeline, the data can be copied directly to the other DynamoDB table)

- Send each item into an SQS queue in the second region; use an auto-scaling group behind the SQS queue to replay the write in the second region. (Replaying the writes is not automated)
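Filtering on a 'Lastupdated' attribute means each daily run copies only the items changed since the previous run. The local sketch below assumes an ISO-8601 `LastUpdated` attribute on each item. The attribute format, the sample items and the `changed_since` helper are assumptions, not details from the referenced blog post. In a real pipeline the filter would live in the copy step itself. For instance, the `filterSql` field of `HiveCopyActivity` accepts a Hive SQL fragment for this purpose.

```python
from datetime import datetime, timedelta, timezone
from typing import Any, Dict, List


def changed_since(items: List[Dict[str, Any]], cutoff: datetime) -> List[Dict[str, Any]]:
    """Items whose (assumed ISO-8601) LastUpdated timestamp is after `cutoff`."""
    return [item for item in items
            if datetime.fromisoformat(item["LastUpdated"]) > cutoff]


# A daily schedule means each run only needs items changed in the last 24 hours,
# which matches the scenario's Recovery Point Objective.
run_time = datetime(2024, 1, 2, tzinfo=timezone.utc)
previous_run = run_time - timedelta(days=1)
items = [
    {"id": "a", "LastUpdated": "2024-01-01T12:00:00+00:00"},  # modified: copy
    {"id": "b", "LastUpdated": "2023-12-30T08:00:00+00:00"},  # unchanged: skip
]
print([item["id"] for item in changed_since(items, previous_run)])  # ['a']
```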

- Your company produces customer-commissioned, one-of-a-kind skiing helmets combining high fashion with custom technical enhancements. Customers can show off their individuality on the ski slopes and have access to head-up displays, GPS rear-view cams and any other technical innovation they wish to embed in the helmet. The current manufacturing process is data rich and complex, including assessments to ensure that the custom electronics and materials used to assemble the helmets meet the highest standards. Assessments are a mixture of human and automated assessments. You need to add a new set of assessments to model the failure modes of the custom electronics using GPUs with CUDA across a cluster of servers with low-latency networking. What architecture would allow you to automate the existing process using a hybrid approach and ensure that the architecture can support the evolution of processes over time?

- Use AWS Data Pipeline to manage movement of data & meta-data and assessments. Use an auto-scaling group of G2 instances in a placement group. (Involves a mixture of human assessments, which Data Pipeline does not handle)

- Use Amazon Simple Workflow (SWF) to manage assessments, movement of data & meta-data. Use an autoscaling group of G2 instances in a placement group. (Human and automated assessments with GPU and low latency networking)

- Use Amazon Simple Workflow (SWF) to manage assessments, movement of data & meta-data. Use an autoscaling group of C3 instances with SR-IOV (Single Root I/O Virtualization). (C3 instances with SR-IOV won’t provide GPUs, and enhanced networking would also need to be enabled)

- Use AWS Data Pipeline to manage movement of data & meta-data and assessments. Use an auto-scaling group of C3 instances with SR-IOV (Single Root I/O Virtualization). (Involves a mixture of human assessments, which Data Pipeline does not handle)