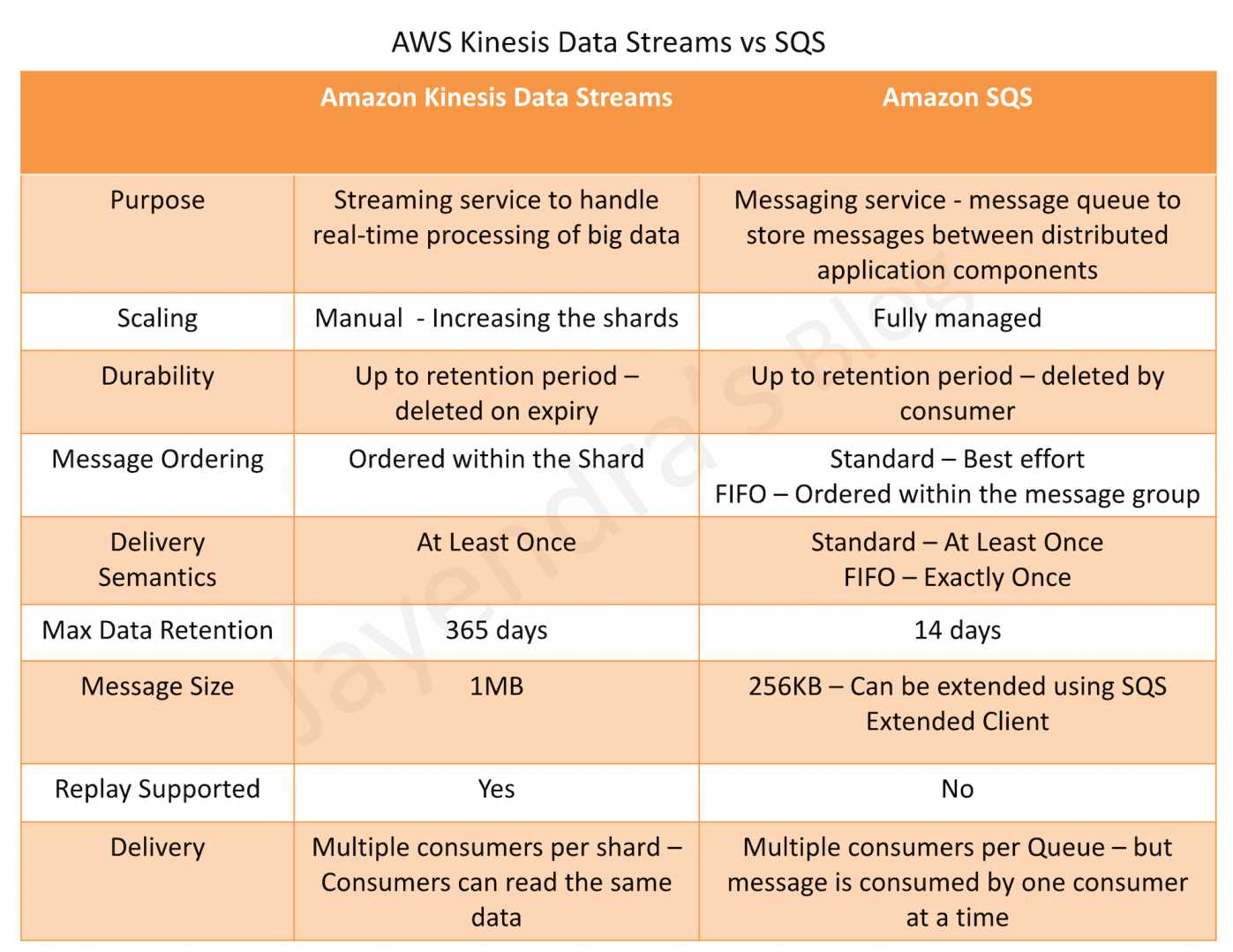

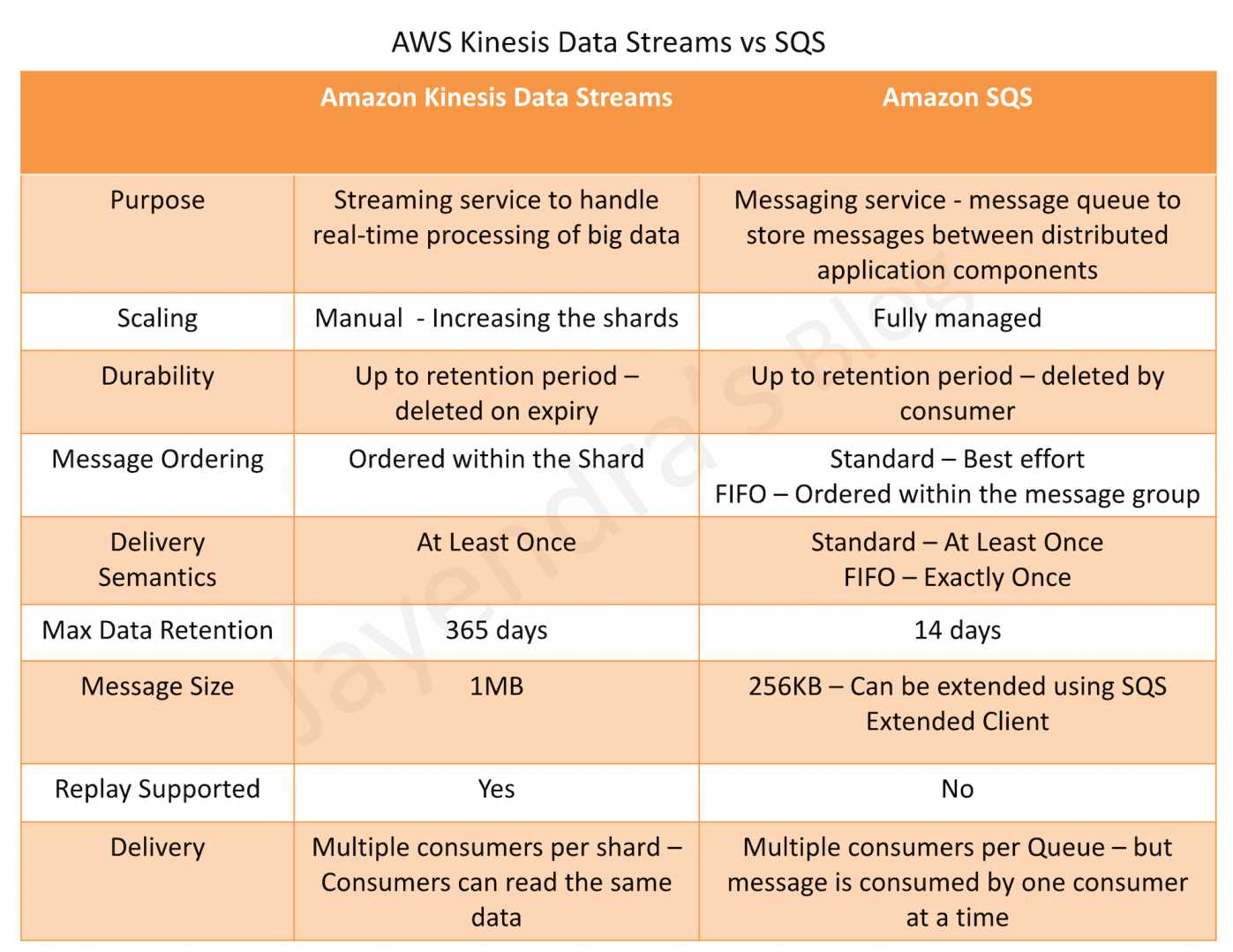

Kinesis Data Streams vs SQS

Purpose

- Amazon Kinesis Data Streams

- allows real-time processing of streaming big data and the ability to read and replay records to multiple Amazon Kinesis Applications.

- Amazon Kinesis Client Library (KCL) delivers all records for a given partition key to the same record processor, making it easier to build multiple applications that read from the same Amazon Kinesis stream (for example, to perform counting, aggregation, and filtering).

- Amazon SQS

- offers a reliable, highly scalable hosted queue for storing messages as they travel between applications or microservices.

- It moves data between distributed application components and helps decouple these components.

- provides common middleware constructs such as dead-letter queues and poison-pill management.

- provides a generic web services API and can be accessed by any programming language that the AWS SDK supports.

- supports both standard and FIFO queues
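The partition-key routing mentioned for Kinesis above can be illustrated with a small sketch. Kinesis hashes each partition key with MD5 into a 128-bit integer and stores the record in the shard whose hash key range contains that value. The function name `shard_for_key` and the evenly split hash ranges are assumptions made for illustration; a stream that has been resharded can have uneven ranges.

```python
import hashlib

HASH_SPACE = 2 ** 128  # MD5 yields a 128-bit value


def shard_for_key(partition_key: str, shard_count: int) -> int:
    """Return the index of the shard that would hold this partition key.

    Assumption: `shard_count` shards split the hash space evenly.
    """
    digest = hashlib.md5(partition_key.encode("utf-8")).digest()
    hash_value = int.from_bytes(digest, "big")
    range_size = HASH_SPACE // shard_count
    # Clamp so the remainder at the top of the space lands in the last shard.
    return min(hash_value // range_size, shard_count - 1)


# Records with the same key always map to the same shard, so a single
# record processor sees them in order.
keys = ["truck-17", "truck-42", "truck-17"]
shards = [shard_for_key(key, 4) for key in keys]
```

Because the mapping depends only on the key, choosing a key with many distinct values (for example a device ID) tends to spread load across shards.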

Scaling

- Kinesis Data Streams is a managed service, but in provisioned mode you must size and scale capacity yourself by adding or removing shards (on-demand mode adjusts capacity automatically)

- SQS is fully managed, highly scalable and requires no administrative overhead and little configuration
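To make the shard provisioning point concrete, here is a rough sizing sketch. It assumes the commonly documented per-shard limits for provisioned mode (1 MB/s or 1,000 records/s for writes, 2 MB/s for reads shared by standard consumers); these numbers are not from this article, so check the current AWS quotas before relying on them. The function name `shards_needed` and the workload figures are hypothetical.

```python
import math

# Assumed per-shard limits (provisioned mode); verify against current AWS quotas.
WRITE_MB_PER_SHARD = 1.0
WRITE_RECORDS_PER_SHARD = 1000
READ_MB_PER_SHARD = 2.0


def shards_needed(write_mb_per_s: float, records_per_s: float, read_mb_per_s: float) -> int:
    """Return the smallest shard count that satisfies all three limits."""
    return max(
        1,
        math.ceil(write_mb_per_s / WRITE_MB_PER_SHARD),
        math.ceil(records_per_s / WRITE_RECORDS_PER_SHARD),
        math.ceil(read_mb_per_s / READ_MB_PER_SHARD),
    )


# Hypothetical workload: 30,000 trucks, one 200-byte record every 3 seconds,
# read in full by two consumer applications.
records_per_s = 30_000 / 3
write_mb_per_s = records_per_s * 200 / 1_000_000
read_mb_per_s = write_mb_per_s * 2
estimate = shards_needed(write_mb_per_s, records_per_s, read_mb_per_s)
```

In this example the record rate, not the byte rate, is the binding constraint, which is typical for many small messages.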

Ordering

- Kinesis provides ordering of records, as well as the ability to read and/or replay records in the same order to multiple Kinesis Applications

- SQS Standard Queue does not guarantee message ordering and provides at-least-once delivery of messages

- SQS FIFO Queue guarantees data ordering within the message group

Data Retention Period

- Kinesis Data Streams retains data for 24 hours by default, and retention can be extended up to 365 days

- SQS retains messages for 4 days by default, and retention can be configured from 1 minute to 14 days; a message is removed as soon as the consumer deletes it
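The retention ranges above can be captured in a small validation sketch. The helper names are hypothetical. The returned dictionaries are shaped like the keyword arguments that, to my understanding, boto3's `increase_stream_retention_period` (Kinesis) and `set_queue_attributes` (SQS) accept; treat that as an assumption and confirm against the SDK documentation. No AWS call is made here.

```python
KINESIS_MIN_HOURS = 24
KINESIS_MAX_HOURS = 365 * 24          # 365 days
SQS_MIN_SECONDS = 60                  # 1 minute
SQS_MAX_SECONDS = 14 * 24 * 60 * 60   # 14 days


def kinesis_retention_params(stream_name: str, hours: int) -> dict:
    """Validate a Kinesis retention period and build the request parameters."""
    if not KINESIS_MIN_HOURS <= hours <= KINESIS_MAX_HOURS:
        raise ValueError(f"retention must be {KINESIS_MIN_HOURS}-{KINESIS_MAX_HOURS} hours")
    return {"StreamName": stream_name, "RetentionPeriodHours": hours}


def sqs_retention_params(queue_url: str, seconds: int) -> dict:
    """Validate an SQS retention period and build the request parameters."""
    if not SQS_MIN_SECONDS <= seconds <= SQS_MAX_SECONDS:
        raise ValueError(f"retention must be {SQS_MIN_SECONDS}-{SQS_MAX_SECONDS} seconds")
    # SQS attribute values are passed as strings.
    return {"QueueUrl": queue_url, "Attributes": {"MessageRetentionPeriod": str(seconds)}}
```

Note that Kinesis has a separate call for shortening retention, so the first helper only fits the "extend" case described above.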

Delivery Semantics

- Kinesis and SQS Standard Queue both guarantee at-least-once delivery of messages, so a consumer may occasionally see the same message more than once.

- SQS FIFO Queue provides exactly-once processing
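With at-least-once delivery, a consumer is usually written to tolerate duplicates. The following is a minimal sketch of an idempotent handler; the class name `IdempotentHandler` and the in-memory set are assumptions for illustration, and a real consumer would likely need a durable store shared across workers.

```python
from typing import Callable, Set


class IdempotentHandler:
    """Wraps a handler so each message ID is processed at most once."""

    def __init__(self, handler: Callable[[str], None]) -> None:
        self._handler = handler
        self._seen: Set[str] = set()  # assumption: in-memory, single process

    def handle(self, message_id: str, body: str) -> bool:
        """Process the message unless its ID was already seen.

        Returns True if the handler ran, False for a duplicate.
        """
        if message_id in self._seen:
            return False
        self._handler(body)
        # Record the ID only after the handler succeeds, so a failed
        # attempt can be retried on redelivery.
        self._seen.add(message_id)
        return True


processed = []
consumer = IdempotentHandler(processed.append)
consumer.handle("msg-1", "charge order 1001")
consumer.handle("msg-1", "charge order 1001")  # duplicate delivery is skipped
```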

Parallel Clients

- Kinesis supports multiple consumers

- SQS allows the messages to be delivered to only one consumer at a time and requires multiple queues to deliver messages to multiple consumers
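The difference in consumer models can be shown with a toy in-memory sketch. `ToyStream` and `ToyQueue` are hypothetical classes, not AWS APIs: the stream keeps every record and tracks a separate read position per application, while the queue hands each message to whichever worker asks first and then drops it.

```python
from collections import deque
from typing import Dict, List, Optional


class ToyStream:
    """Records are retained; each application reads from its own position."""

    def __init__(self) -> None:
        self._records: List[str] = []
        self._positions: Dict[str, int] = {}

    def put(self, record: str) -> None:
        self._records.append(record)

    def read(self, app_name: str, limit: int = 10) -> List[str]:
        start = self._positions.get(app_name, 0)
        batch = self._records[start:start + limit]
        self._positions[app_name] = start + len(batch)
        return batch

    def rewind(self, app_name: str, position: int = 0) -> None:
        """Replay: move one application's position back."""
        self._positions[app_name] = position


class ToyQueue:
    """Each message goes to exactly one worker and is then removed."""

    def __init__(self) -> None:
        self._messages: deque = deque()

    def send(self, message: str) -> None:
        self._messages.append(message)

    def receive_and_delete(self) -> Optional[str]:
        return self._messages.popleft() if self._messages else None


stream, queue = ToyStream(), ToyQueue()
for item in ["a", "b"]:
    stream.put(item)
    queue.send(item)

billing_view = stream.read("billing")      # sees both records
analytics_view = stream.read("analytics")  # also sees both records
worker_1 = queue.receive_and_delete()      # gets one message
worker_2 = queue.receive_and_delete()      # gets the other message
```

This is why fanning out to several independent consumers with SQS needs one queue per consumer, as noted above.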

Use Cases

- Kinesis use case requirements

- Ordering of records.

- Ability to consume records in the same order a few hours later

- Ability for multiple applications to consume the same stream concurrently

- Routing related records to the same record processor (as in streaming MapReduce)

- SQS use case requirements

- Messaging semantics like message-level ack/fail and visibility timeout

- Leveraging SQS’s ability to scale transparently

- Dynamically increasing concurrency/throughput at read time

- Individual message delay, where delivery of a specific message can be postponed (up to 15 minutes)
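The SQS messaging semantics listed above (message-level ack/fail, visibility timeout, and per-message delay) can be sketched with a toy model. `ToyVisibilityQueue` is a hypothetical class, not the SQS API, and time is passed in explicitly as `now` to keep the example deterministic.

```python
from typing import Dict, List, Optional, Tuple


class ToyVisibilityQueue:
    """Toy model: a received message is hidden until it is deleted or times out."""

    def __init__(self, visibility_timeout: float) -> None:
        self._timeout = visibility_timeout
        self._messages: Dict[int, List] = {}  # id -> [body, visible_at]
        self._next_id = 0

    def send(self, body: str, now: float, delay: float = 0.0) -> int:
        """Enqueue a message; `delay` postpones its first delivery."""
        message_id = self._next_id
        self._next_id += 1
        self._messages[message_id] = [body, now + delay]
        return message_id

    def receive(self, now: float) -> Optional[Tuple[int, str]]:
        """Return the first visible message and hide it for the timeout."""
        for message_id, entry in self._messages.items():
            if entry[1] <= now:
                entry[1] = now + self._timeout
                return message_id, entry[0]
        return None

    def delete(self, message_id: int) -> None:
        """Acknowledge: the message is removed and never redelivered."""
        self._messages.pop(message_id, None)


jobs = ToyVisibilityQueue(visibility_timeout=30)
job_id = jobs.send("resize image", now=0)
first = jobs.receive(now=1)     # delivered and hidden until t=31
hidden = jobs.receive(now=10)   # nothing visible: another worker gets None
retry = jobs.receive(now=31)    # not deleted in time, so it is redelivered
jobs.delete(job_id)             # ack after successful processing
```

A worker that fails simply skips the delete, and the message becomes visible again after the timeout.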

AWS Certification Exam Practice Questions

- Questions are collected from the Internet, and the answers are marked as per my knowledge and understanding (which might differ from yours).

- AWS services are updated every day, and both the answers and questions might soon be outdated, so research accordingly.

- AWS exam questions are not updated to keep pace with AWS updates, so even if the underlying feature has changed, the question might not be updated.

- Open to further feedback, discussion and correction.

- You are deploying an application to track GPS coordinates of delivery trucks in the United States. Coordinates are transmitted from each delivery truck once every three seconds. You need to design an architecture that will enable real-time processing of these coordinates from multiple consumers. Which service should you use to implement data ingestion?

- Amazon Kinesis

- AWS Data Pipeline

- Amazon AppStream

- Amazon Simple Queue Service

- Your customer is willing to consolidate their log streams (access logs, application logs, security logs, etc.) in one single system. Once consolidated, the customer wants to analyze these logs in real time based on heuristics. From time to time, the customer needs to validate heuristics, which requires going back to data samples extracted from the last 12 hours. What is the best approach to meet your customer’s requirements?

- Send all the log events to Amazon SQS. Set up an Auto Scaling group of EC2 servers to consume the logs and apply the heuristics.

- Send all the log events to Amazon Kinesis and develop a client process to apply heuristics on the logs (can perform real-time analysis and stores data for 24 hours by default, which can be extended to 365 days)

- Configure Amazon CloudTrail to receive custom logs, use EMR to apply heuristics on the logs (CloudTrail is only for auditing)

- Set up an Auto Scaling group of EC2 syslogd servers, store the logs on S3, and use EMR to apply heuristics on the logs (EMR is for batch analysis)

References

Kinesis Data Streams – Comparison with other services