Clearing the AWS Certified Big Data – Specialty (BDS-C00) exam was a great feeling. This was my third Specialty certification, and in terms of difficulty I would rate it between the Network Specialty exam (the toughest) and the Security Specialty exam (the simpler one).

Big Data is a vast topic in itself, and with the AWS services on top there is a lot to cover and know for the exam. If you have worked with Big Data technologies, including a bit of visualization and machine learning, that experience will be a great asset in passing this exam.

The AWS Certified Big Data – Specialty (BDS-C00) exam validates your ability to:

- Implement core AWS Big Data services according to basic architectural best practices

- Design and maintain Big Data

- Leverage tools to automate Data Analysis

Refer to the AWS Certified Big Data – Specialty Exam Guide for details.

AWS Certified Big Data – Specialty (BDS-C00) Exam Summary

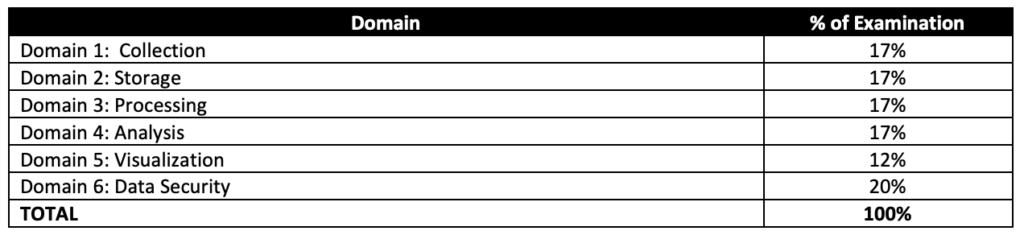

- The AWS Certified Big Data – Specialty exam, as its name suggests, covers a lot of Big Data concepts, from data transfer and collection techniques through storage, pre- and post-processing, analytics, and visualization, with the added concepts of data security at each layer.

- One key tactic I follow when solving any AWS certification exam is to read the question, use paper and pencil to draw a rough architecture, and focus on the areas that need improvement. Trust me, you will be able to eliminate two answers for sure and then need to focus only on the other two. Compare those two answers to see where they differ; that will help you reach the right answer, or at least give you a 50% chance of getting it right.

- Be sure to cover the following topics

- Whitepapers and articles

- AWS Analytics Services Cheat Sheet

- Data Transfer Options – Be clear on the use cases for VPN vs Direct Connect vs Snowball

- Analytics

- Make sure you know and cover all the services in depth, as 80% of the exam is focused on these topics

- Elastic MapReduce (EMR)

- Understand EMR in depth

- Understand EMRFS (hint: Use Consistent view to make sure S3 objects referred by different applications are in sync)

- Know EMR Best Practices (hint: start with many small nodes instead of a few large nodes)

- Know the Hive metastore can be hosted externally using RDS, Aurora, or the AWS Glue Data Catalog

- Also know the related technologies

- Presto is a fast SQL query engine designed for interactive analytic queries over large datasets from multiple sources

- D3.js is a JavaScript library for manipulating documents based on data. D3 helps you bring data to life using HTML, SVG, and CSS

- Spark is a distributed processing framework and programming model that helps do machine learning, stream processing, or graph analytics using Amazon EMR clusters

- Zeppelin and Jupyter are notebooks for interactive data exploration: open-source web applications used to create and share documents that contain live code, equations, visualizations, and narrative text

- Phoenix is used for OLTP and operational analytics, allowing you to use standard SQL queries and JDBC APIs to work with an Apache HBase backing store

- Kinesis

- Understand Kinesis Data Streams and Kinesis Data Firehose in depth

- Know Kinesis Data Streams vs Kinesis Firehose

- Know Kinesis Data Streams is open-ended on both the producer and the consumer side. It supports KCL and works with Spark.

- Know Kinesis Firehose is open-ended on the producer side only. Data is delivered to S3, Redshift, and Elasticsearch.

- Kinesis Firehose works in batches with a minimum interval of 60 seconds.

- Understand Kinesis Encryption (hint: use server side encryption or encrypt in producer for data streams)

- Know the difference between KPL and SDK (hint: SDK PutRecords calls are synchronous, while KPL supports batching)

- Kinesis Best Practices (hint: increase performance by increasing the number of shards)

- Know Elasticsearch is a search service that supports indexing, full-text search, faceting, etc.
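To make the shard and batching hints for Kinesis a bit more concrete, here is a rough sketch that estimates a shard count and splits records into PutRecords-sized batches. It assumes the commonly documented per-shard limits for provisioned Kinesis Data Streams (1 MB/s or 1,000 records/s for writes, 2 MB/s for reads) and the 500-record cap per PutRecords call. The function names and the sample workload are made up for illustration, and the per-request byte limits are ignored, so check the current service quotas before relying on it.

```python
import math

# Per-shard limits for provisioned Kinesis Data Streams (assumed; verify against current quotas).
WRITE_MB_PER_SEC = 1.0
WRITE_RECORDS_PER_SEC = 1000
READ_MB_PER_SEC = 2.0
MAX_RECORDS_PER_PUT = 500  # PutRecords accepts at most 500 records per call


def estimate_shards(in_mb_per_sec, records_per_sec, out_mb_per_sec):
    """Return the smallest shard count that satisfies all three throughput limits."""
    return max(
        1,
        math.ceil(in_mb_per_sec / WRITE_MB_PER_SEC),
        math.ceil(records_per_sec / WRITE_RECORDS_PER_SEC),
        math.ceil(out_mb_per_sec / READ_MB_PER_SEC),
    )


def chunk_for_put_records(records, batch_size=MAX_RECORDS_PER_PUT):
    """Split a list of records into batches small enough for one PutRecords call."""
    return [records[i:i + batch_size] for i in range(0, len(records), batch_size)]


# Hypothetical workload: 4.5 MB/s in, 3,000 records/s, 12 MB/s read by consumers.
shards = estimate_shards(4.5, 3000, 12.0)
batches = chunk_for_put_records([f"event-{i}" for i in range(1200)])
print(shards, [len(b) for b in batches])
```

In this made-up workload the read side is the bottleneck, which is why the estimate takes the maximum of the three limits rather than looking at ingest volume alone.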

- Redshift

- Understand Redshift in depth

- Understand advanced Redshift topics such as Workload Management, Distribution Style, and Sort Key

- Know Redshift Best Practices for selecting the distribution style and sort key, and for the COPY command, which loads data in parallel

- Know Redshift views to control access to data.
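As a rough illustration of why the choice of distribution key matters in Redshift, the sketch below simulates KEY distribution by hashing each row's key to a slice and comparing how evenly the rows land. Redshift's real hash function is internal, so `zlib.crc32` is only a stand-in, and the slice count, column names, and data are hypothetical.

```python
import zlib
from collections import Counter


def rows_per_slice(keys, num_slices):
    """Assign each row to a slice by hashing its distribution key; return row counts per slice."""
    counts = Counter(zlib.crc32(str(k).encode()) % num_slices for k in keys)
    return [counts.get(s, 0) for s in range(num_slices)]


def skew_ratio(counts):
    """Ratio of the fullest slice to the average slice (1.0 means perfectly even)."""
    return max(counts) / (sum(counts) / len(counts))


NUM_SLICES = 8  # hypothetical cluster size

# High-cardinality key: every row has its own order id.
order_ids = range(10_000)
# Low-cardinality key: only two distinct status values.
statuses = ["OPEN" if i % 2 else "CLOSED" for i in range(10_000)]

by_order_id = rows_per_slice(order_ids, NUM_SLICES)
by_status = rows_per_slice(statuses, NUM_SLICES)
print("order_id skew:", skew_ratio(by_order_id))
print("status skew:", skew_ratio(by_status))
```

With only two distinct values, the status key can occupy at most two slices and leaves the rest idle, while the order id spreads rows across every slice. That is the intuition behind preferring a high-cardinality, frequently joined column as the distribution key.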

- Amazon Machine Learning

- Know difference in algorithms esp. Binary classification vs Multiclass vs Regression
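A small sketch of how the three Amazon Machine Learning model types line up with the target you are predicting. The helper below is a hypothetical heuristic, not part of any AWS SDK; the returned strings match the model type names Amazon ML uses (BINARY, MULTICLASS, REGRESSION).

```python
def choose_model_type(target_values):
    """Pick an Amazon ML model type from the observed values of the target attribute."""
    distinct = set(target_values)
    if len(distinct) == 2:
        # Two possible outcomes, e.g. spam / not spam (logistic regression).
        return "BINARY"
    if all(isinstance(v, (int, float)) for v in distinct):
        # Numeric target, e.g. a price (linear regression).
        return "REGRESSION"
    # One of several categories (multinomial logistic regression).
    return "MULTICLASS"


print(choose_model_type(["spam", "ham", "spam"]))
print(choose_model_type([12.5, 99.0, 47.2]))
print(choose_model_type(["red", "green", "blue"]))
```

The heuristic is deliberately crude (a numeric target with only two distinct values is treated as binary), but it mirrors the kind of question the exam asks: given what is being predicted, which model type fits.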

- Know Data Pipeline for data transfer

- QuickSight

- Know Visual Types (hint: esp. plotting line, bar and story based visualizations)

- Know Supported Data Sources (hint: supports files)

- Know Glue as the ETL tool

- Security, Identity & Compliance

- Data security is a key concept covered in the Big Data – Specialty exam

- Identity and Access Management (IAM)

- Understand IAM in depth

- Understand IAM Roles

- Understand Identity Providers & Federation (hint: restrict access based on assumed role)

- Understand IAM Policies

- Deep dive into Key Management Service (KMS). There would be quite a few questions on this.

- Understand how KMS works

- Understand IAM Policies, Key Policies, Grants

- Know KMS keys are regional and how to use them in other regions.

- Understand AWS Cognito esp. authentication across devices

- Management & Governance Tools

- Understand AWS CloudWatch for Logs and Metrics. Also know that CloudWatch Events provides more real-time alerts than CloudTrail

- Storage

- Data Storage Options – Know patterns for S3 vs RDS vs DynamoDB vs Redshift

- Simple Storage Service

- Know S3 Data Protection

- Know S3 Access Control (hint: ACLs for fine grained access control)

- DynamoDB

- Know DynamoDB

- Know DynamoDB Secondary Indexes

- Know DynamoDB security (hint: allows fine grained access control)

- Know DynamoDB Accelerator (DAX) for caching
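To illustrate the fine-grained access control hint for DynamoDB, here is a minimal sketch that builds an IAM policy restricting a federated user to items whose partition key equals their own identity. It uses the `dynamodb:LeadingKeys` condition key with the Amazon Cognito identity variable; the helper name, table ARN, account ID, and chosen actions are placeholders for illustration.

```python
import json


def leading_key_policy(table_arn):
    """Build a policy allowing access only to items whose partition key is the caller's Cognito identity."""
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Effect": "Allow",
                "Action": ["dynamodb:GetItem", "dynamodb:Query", "dynamodb:PutItem"],
                "Resource": table_arn,
                "Condition": {
                    # Every partition key value in the request must match the caller's identity.
                    "ForAllValues:StringEquals": {
                        "dynamodb:LeadingKeys": ["${cognito-identity.amazonaws.com:sub}"]
                    }
                },
            }
        ],
    }


# Placeholder ARN; substitute your own region, account, and table name.
policy = leading_key_policy("arn:aws:dynamodb:us-east-1:123456789012:table/GameScores")
print(json.dumps(policy, indent=2))
```

Attached to the role that federated users assume, a policy like this ties together the IAM federation, Cognito, and DynamoDB security topics listed above.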

- Compute

- Know that EC2 accesses services using an IAM role and Lambda using an execution role.

- Know Lambda, esp. how to improve performance through batching, breaking up functions, etc.


AWS Certified Big Data – Specialty (BDS-C00) Exam Resources

- Online Courses

- Stephane Maarek – AWS Certified Big Data Specialty Exam – In Depth & Hands On [Recommended]

- Linux Academy – AWS Certified Big Data Specialty course

- Practice tests

- Braincert – AWS Certified Big Data – Speciality BDS-C00 Practice Exams [Recommended]